HGST will be demonstrating its breakthrough persistent memory fabric at the Flash Memory Summit 2015 in Santa Clara, California, over the next couple of days. The firm's technology, based on Phase Change Memory (PCM), is expected to deliver DRAM-like performance at a lower cost of ownership and with greater scalability, enabling the growth of in-memory computing.

Some see in-memory computing as a cornerstone of future data centre processing. Its adoption is currently held back by the limitations of DRAM: HGST claims that DRAM accounts for 20 to 30 per cent of the power budget in today's data centres. Because HGST's non-volatile PCM does not need powered refresh, the company says it can be scaled up further while still offering users DRAM-like performance.

HGST outlines a network-based approach in which applications access non-volatile PCM across multiple computers, scaling out as needed. The system uses the Remote Direct Memory Access (RDMA) protocol over networking infrastructure such as Ethernet or InfiniBand. HGST says it is reliable, scalable and low-power, and that it can be deployed without BIOS modifications or rewriting applications.

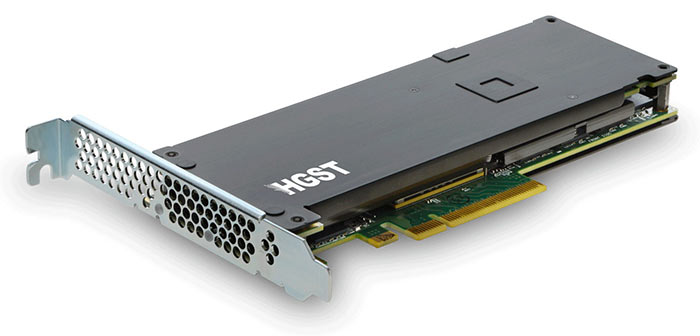

Last year HGST demonstrated its PCM PCIe SSD, pictured above, delivering a record-breaking three million IOPS. It says that, in collaboration with InfiniBand solutions company Mellanox, it can offer random access latency below 2μs for 512-byte reads and throughput exceeding 3.5GB/s for 2KB block sizes using RDMA over InfiniBand.
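To give a sense of scale, the throughput figure can be converted into an approximate transfer rate. This is a back-of-the-envelope sketch, not an HGST figure. It assumes decimal gigabytes (10^9 bytes) and 1,024-byte kilobytes. Because the quoted throughput is a lower bound ("exceeding"), the result is a lower bound too.

```python
# Rough conversion of the quoted throughput into 2KB transfers per second.
# Assumptions (not stated by HGST): 1 GB = 10**9 bytes, 1 KB = 1024 bytes.
THROUGHPUT_BYTES_PER_S = 3.5e9   # "exceeding 3.5GB/s"
BLOCK_SIZE_BYTES = 2 * 1024      # "2KB block sizes"


def ops_per_second(throughput_bytes_per_s: float, block_size_bytes: int) -> float:
    """Return how many fixed-size transfers per second a given throughput implies."""
    return throughput_bytes_per_s / block_size_bytes


ops = ops_per_second(THROUGHPUT_BYTES_PER_S, BLOCK_SIZE_BYTES)
print(f"{ops / 1e6:.2f} million 2KB transfers per second")
```

Under these assumptions, the demonstration moved roughly 1.7 million 2KB blocks per second.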

"DRAM is expensive and consumes significant power, but today's alternatives lack sufficient density and are too slow to be a viable replacement," said Steve Campbell, HGST’s chief technology officer. "Last year our Research arm demonstrated Phase Change Memory as a viable DRAM performance alternative at a new price and capacity tier bridging main memory and persistent storage. To scale out this level of performance across the data centre requires further innovation. Our work with Mellanox proves that non-volatile main memory can be mapped across a network with latencies that fit inside the performance envelope of in-memory compute applications."

HGST revealed that it makes the most of low-latency PCM over a network by applying PCI Express peer-to-peer technology to create a low-latency storage fabric.

As mentioned above, the HGST phase-change SSD was first shown at the Flash Memory Summit a year ago. Hopefully this new demonstration of PCM in an in-memory computing system means it is closer to market. The 2015 Flash Memory Summit begins tomorrow, and I will update this post with any significant news about HGST's persistent memory fabric from the event.