The GeForce GTX 970 supposed memory issue

You may have read about the recent Internet furore surrounding the GeForce GTX 970, a GPU that has sold by the proverbial bucketloads since launch in September 2014.

This furore picks up on the fact that the GeForce GTX 970's memory-bandwidth performance dives when more than 3.5GB out of the possible 4GB is used, and, crucially, this is a situation that doesn't arise with the seemingly architecturally-similar GeForce GTX 980.

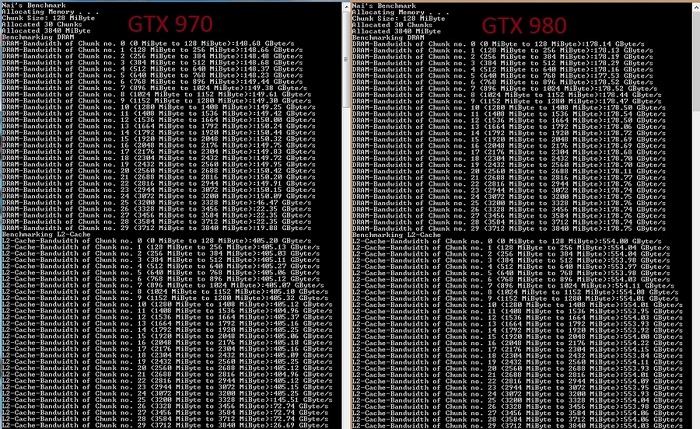

Digging deeper and putting some numbers on these bones, the folks over at Lazygamer.net have shown that after approximately 3.3GB of usage the memory bandwidth of the GTX 970 tails off dramatically, from 150GB/s down to as low as 20GB/s - see the accompanying image for more details.

Image credit: Lazygamer

The 'bug' has limited implications for the vast majority of users, granted, but readers interested in 4K gaming or using the new Dynamic Super Resolution (DSR) down-sampling technique may encounter problems.

The first Nvidia response

Nvidia has since addressed this supposed memory-bandwidth issue by issuing the following statement:

"The GeForce GTX 970 is equipped with 4GB of dedicated graphics memory. However the 970 has a different configuration of SMs than the 980, and fewer crossbar resources to the memory system. To optimally manage memory traffic in this configuration, we segment graphics memory into a 3.5GB section and a 0.5GB section. The GPU has higher priority access to the 3.5GB section. When a game needs less than 3.5GB of video memory per draw command then it will only access the first partition, and 3rd party applications that measure memory usage will report 3.5GB of memory in use on GTX 970, but may report more for GTX 980 if there is more memory used by other commands. When a game requires more than 3.5GB of memory then we use both segments.

We understand there have been some questions about how the GTX 970 will perform when it accesses the 0.5GB memory segment. The best way to test that is to look at game performance. Compare a GTX 980 to a 970 on a game that uses less than 3.5GB. Then turn up the settings so the game needs more than 3.5GB and compare 980 and 970 performance again.

Here’s an example of some performance data:

| Game and setting | GTX 980 | GTX 970 |
|---|---|---|
| Shadow of Mordor | | |
| <3.5GB setting = 2688x1512 Very High | 72 FPS | 60 FPS |
| >3.5GB setting = 3456x1944 | 55 FPS (-24%) | 45 FPS (-25%) |
| Battlefield 4 | | |
| <3.5GB setting = 3840x2160 2xMSAA | 36 FPS | 30 FPS |
| >3.5GB setting = 3840x2160 135% res | 19 FPS (-47%) | 15 FPS (-50%) |
| Call of Duty: Advanced Warfare | | |
| <3.5GB setting = 3840x2160 FSMAA T2x, Supersampling off | 82 FPS | 71 FPS |
| >3.5GB setting = 3840x2160 FSMAA T2x, Supersampling on | 48 FPS (-41%) | 40 FPS (-44%) |

On GTX 980, Shadows of Mordor drops about 24% on GTX 980 and 25% on GTX 970, a 1% difference. On Battlefield 4, the drop is 47% on GTX 980 and 50% on GTX 970, a 3% difference. On CoD: AW, the drop is 41% on GTX 980 and 44% on GTX 970, a 3% difference. As you can see, there is very little change in the performance of the GTX 970 relative to GTX 980 on these games when it is using the 0.5GB segment."
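The percentage drops in Nvidia's statement can be re-derived from the quoted frame rates. The Python sketch below does just that; the names `RESULTS`, `percent_drop` and `drop_gap` are our own illustrative choices, and the only inputs are the FPS figures from Nvidia's table. Note that the "1%" and "3%" differences Nvidia cites are gaps between two percentages, so strictly they are percentage points.

```python
# FPS figures as quoted in Nvidia's statement: (below 3.5GB, above 3.5GB).
RESULTS = {
    "Shadow of Mordor": {"GTX 980": (72, 55), "GTX 970": (60, 45)},
    "Battlefield 4": {"GTX 980": (36, 19), "GTX 970": (30, 15)},
    "Call of Duty: Advanced Warfare": {"GTX 980": (82, 48), "GTX 970": (71, 40)},
}


def percent_drop(before, after):
    """Relative frame-rate loss, in percent, when moving to the heavier setting."""
    return (before - after) / before * 100


def drop_gap(game):
    """Percentage-point gap between the GTX 970's drop and the GTX 980's drop."""
    cards = RESULTS[game]
    return percent_drop(*cards["GTX 970"]) - percent_drop(*cards["GTX 980"])


for game, cards in RESULTS.items():
    drops = {card: round(percent_drop(*fps)) for card, fps in cards.items()}
    print(game, drops, f"gap: {drop_gap(game):.1f} points")
```

Rounded to whole numbers, the drops match the figures in the table; the unrounded gaps sit between roughly one and three points.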

What's going on, then?

Nvidia's first response admits the GeForce GTX 970's memory subsystem is composed differently to the GTX 980's - with a primary, fast 3.5GB partition and a secondary 0.5GB partition accessed only when needed - but doesn't go on to provide any meaningful technical insight other than to say that in-game performance barely suffers. Such a statement left us unsatisfied.

Just a couple of hours ago HEXUS was invited to chat with Jonah Alben, senior vice president of GPU engineering at Nvidia, who provided a high-level overview of the new memory subsystem employed by the GeForce GTX 970.

Nvidia and AMD make engineering decisions on how best to harvest the full-fat architecture of a particular class of GPU. The GM204's premier interpretation is the GeForce GTX 980. The GTX 970, meanwhile, appears to keep most of the GTX 980's goodness intact, save for the disabling of three of the 16 SMM units, thus pushing the number of cores down from 2,048 to 1,664, texture units from 128 to 104, and so forth. We praised Nvidia for keeping the heavy-lifting back-end the same as GTX 980, meaning 64 ROPs and 2,048KB of L2 cache. This, Jonah revealed, is not the case.
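The front-end arithmetic is easy to check. The sketch below assumes, as the figures above imply, that cores and texture units are spread evenly across the 16 SMMs; the constant and function names are ours, purely for illustration.

```python
# Full GM204 configuration, as shipped in the GTX 980 (figures from the article).
FULL_SMMS = 16
FULL_CORES = 2048
FULL_TEXTURE_UNITS = 128


def scale_by_smm(active_smms):
    """Return (cores, texture units) for a part with the given number of SMMs.

    Assumes resources are divided evenly between SMMs.
    """
    cores_per_smm = FULL_CORES // FULL_SMMS            # 128
    textures_per_smm = FULL_TEXTURE_UNITS // FULL_SMMS  # 8
    return active_smms * cores_per_smm, active_smms * textures_per_smm


cores, texture_units = scale_by_smm(FULL_SMMS - 3)  # three SMMs disabled
print(cores, texture_units)  # 1664 104
```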

GTX 970 is not what you first thought it was

It turns out that the GeForce GTX 970 has 56 ROPs, not the 64 listed in reviewers' guides back in September, and such knowledge leads to interesting questions. Nvidia has clearly known about this inaccuracy for some time but has chosen not to contact the technical press with an update or explanation. Having fewer ROPs isn't a huge problem for the top-end of the GeForce GTX 970 - we'll explain why a little later on - but does have important ramifications for the memory subsystem, and answers the 3.5GB/0.5GB issue referred to above.

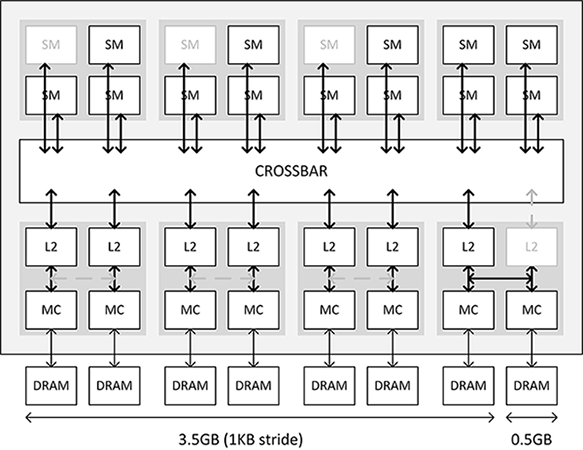

This Nvidia-provided slide gives brief insight into how the GTX 970 is constructed. The three disabled SMs are shown at the top, with the 256KB L2s and pairs of 32-bit memory controllers at the bottom. Notice the greyed-out right-hand L2 for this GPU? Because the L2s are tied into the ROPs, this is a direct consequence of reducing the overall ROP count. GTX 970 has 1,792KB of L2 cache, not 2,048KB, but, as Alben points out, still has a greater cache-to-SMM ratio than GTX 980.
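Alben's cache-to-SMM point follows directly from the numbers. A minimal sketch, assuming eight 256KB L2 slices on the full chip (which is what 2,048KB implies) and using function names of our own invention:

```python
L2_SLICE_KB = 256  # size of each L2 slice, per the Nvidia slide


def l2_per_smm(l2_slices, smms):
    """Return (total L2 in KB, L2 in KB per SMM) for a given configuration."""
    total_kb = l2_slices * L2_SLICE_KB
    return total_kb, total_kb / smms


gtx980_total, gtx980_ratio = l2_per_smm(l2_slices=8, smms=16)
gtx970_total, gtx970_ratio = l2_per_smm(l2_slices=7, smms=13)

print(f"GTX 980: {gtx980_total}KB L2, {gtx980_ratio:.1f}KB per SMM")
print(f"GTX 970: {gtx970_total}KB L2, {gtx970_ratio:.1f}KB per SMM")
```

The GTX 970 loses one slice of cache but three SMMs, so each remaining SMM has slightly more L2 to itself.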

Historically, including up to the Kepler generation, cutting off the L2/ROP portion would require the entire right-hand quad section to be deactivated too. Now, with Maxwell, Nvidia is able to use some smarts and still tap into the 64-bit memory controllers and associated DRAM even though the final L2 is missing/disabled. In other words, compared to previous generations, it can preserve more of the performance architecture even though a key part of a quad is purposely left out. This is good engineering.

But while it's still accurate to say the GeForce GTX 970 has a 256-bit bus through to a 4GB framebuffer - the memory controllers are all active, remember - cutting out some of the L2 but keeping all the MCs intact causes other problems; the usual eighth L2 is no longer there to access, meaning that the seventh L2 will be hit twice. The way in which the L2s work makes this a very undesirable exercise, Alben explains, because it forces all other L2s to operate at half normal speed.

Smoke and mirrors

Finally coming back to the point, Nvidia gets around this L2 problem by splitting the 4GB memory into a regular 3.5GB section, constituted by seven MCs and associated DRAM, and a 0.5GB section for the last memory controller. The company could have marketed the GeForce GTX 970 as a 3.5GB card, or even deactivated the entire right-hand quad and used a 192-bit memory interface allied to 3GB of memory, but chose not to do so. The thinking behind such a move revolves around the last L2 cache/MC partition combo still being considerably faster than the card having to run out over the PCIe bus to system memory, as is the case when the onboard framebuffer of any card is saturated.

The real question is how the huge memory-bandwidth drop-off in the Lazygamer Nia test squares with Nvidia's statement that games barely suffer from this smart engineering. The Lazygamer test at the >3.5GB metric simply probes bandwidth on a single DRAM, which is admittedly low, at 1/8th of the total speed, while in-game code, according to Nvidia, doesn't necessarily pinpoint memory in this way. There's certainly a memory-bandwidth drop-off when the 0.5GB section is called into action, Alben states, but it's not anywhere near as severe if infrequently accessed code is shunted into the last two MCs and DRAM.
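To put rough numbers on that argument, here is a back-of-the-envelope model rather than a description of how the hardware actually schedules traffic. It assumes a theoretical peak of 224GB/s (the GTX 970's spec-sheet figure, not stated above), an even split of that peak across eight controllers, and that the two segments are serviced one after the other. All names are hypothetical.

```python
PEAK_GBPS = 224.0      # assumed spec-sheet peak, not a figure from the article
CONTROLLERS = 8        # total memory controllers
FAST_CONTROLLERS = 7   # controllers serving the 3.5GB section


def segment_bandwidth(peak=PEAK_GBPS):
    """Split peak bandwidth evenly per controller; return (fast, slow) in GB/s."""
    per_controller = peak / CONTROLLERS
    fast = per_controller * FAST_CONTROLLERS
    slow = per_controller * (CONTROLLERS - FAST_CONTROLLERS)
    return fast, slow


def blended_bandwidth(fast, slow, slow_fraction):
    """Effective GB/s when slow_fraction of all bytes live in the slow segment.

    Simplification: the segments are accessed serially, so transfer times add.
    """
    seconds_per_gb = (1 - slow_fraction) / fast + slow_fraction / slow
    return 1 / seconds_per_gb


fast, slow = segment_bandwidth()
for share in (0.0, 0.05, 0.25, 1.0):
    rate = blended_bandwidth(fast, slow, share)
    print(f"{share:.0%} of traffic in the slow segment: {rate:.0f} GB/s")
```

Under these assumptions a test that hammers only the last segment sees the 1/8th figure, whereas a workload that touches it for a small share of its traffic loses far less, which is the gist of Nvidia's position. Real-world figures, such as the 150GB/s and 20GB/s reported by Lazygamer, will sit below these theoretical peaks.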

In a high-level nutshell Nvidia is using smart engineering to get the most out of the GTX 970's architecture. The lack of total ROPs is relatively unimportant because this GPU cannot make use of them - the 13 SM units, running at four pixels per clock (so 52 in total), limit the GPU more than the 56 pixels per clock the ROPs can process. The GeForce GTX 970's performance hasn't changed, obviously, but Nvidia wasn't clear on how the back-end works... and it has taken investigation by enthusiasts to uncover the real reason why this 256-bit architecture isn't as good as the GTX 980's.
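The ROP argument boils down to taking the smaller of two rates. A minimal sketch, with a function name of our own choosing and the four-pixels-per-clock figure taken from the paragraph above:

```python
def pixel_limit(sm_count, pixels_per_sm_clock, rop_count):
    """Return (effective pixels per clock, SM-side pixels per clock).

    The effective rate is capped by whichever of the SMs or ROPs is slower.
    """
    sm_rate = sm_count * pixels_per_sm_clock
    return min(sm_rate, rop_count), sm_rate


with_56_rops, sm_rate = pixel_limit(13, 4, 56)
with_64_rops, _ = pixel_limit(13, 4, 64)

print(sm_rate, with_56_rops, with_64_rops)  # 52 52 52
```

With 13 SMs the front-end tops out at 52 pixels per clock, so the result is the same whether the card has 56 or 64 ROPs.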

Nvidia stung into action?

We've learned that the GeForce GTX 970 is more than an SMM-reduced version of the GTX 980, which is a fact that will come into play once that 0.5GB is routinely accessed. We've learned that Nvidia chose not to disclose the GTX 970's differences until Internet rumours surfaced on a potential memory-bandwidth issue, and we have learned that Nvidia has known all along that the information passed along to reviewers - ROP counts, L2 cache, etc. - has been wrong... and did nothing about it until forced to do so when speculation grew too rife.

There are hundreds of behind-the-scenes tweaks made to each iteration of a GPU architecture. Some of the more important ones, particularly with regards to high-level architecture, should be disclosed from the get-go. Nvidia chose not to with the highly-accomplished GeForce GTX 970, wrongly in our opinion, and while the under-the-hood tweaks don't materially change performance, Nvidia should have been very clear that the GeForce GTX 970 and GTX 980 use different back-end technologies, particularly for buyers who can tax the main 3.5GB framebuffer when gaming at 4K.

What do you, the gamers, make of Nvidia's initial reluctance to share how the GeForce GTX 970 really works? Or do you even care, because performance hasn't changed one iota from yesterday to today?