A new high-performance computing (HPC) cluster has been installed at Lawrence Livermore National Laboratory (LLNL) in California. The cluster, dubbed Mammoth, was designed in a collaboration between AMD, LLNL researchers, Supermicro and Cornelis Networks. What sets Mammoth apart is that, while it has plenty of processing power, its focus is on delivering 'Big Memory' computing capabilities. In other words, it has far more memory installed than one might expect of a typical HPC cluster of its size.

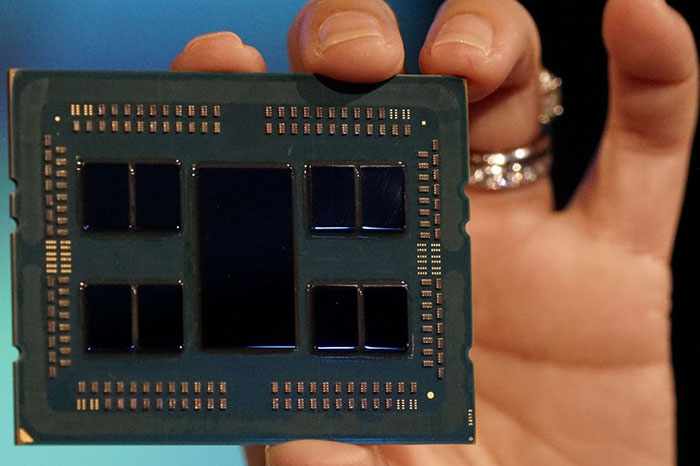

Mammoth is built from 64 servers, each equipped with two AMD Epyc CPUs. Each Epyc CPU has 64 cores capable of processing 128 threads. Mammoth therefore has a total of 8,192 processor cores available for compute (16,384 threads). It has a peak performance of 294 TFLOPS.
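The core and thread totals follow directly from the per-server figures. A quick sketch of the arithmetic, assuming two hardware threads per core (which is what 64 cores and 128 threads per CPU implies):

```python
# Figures from the article: 64 servers, 2 CPUs per server, 64 cores per CPU.
# Assumption: 2 hardware threads per core (64 cores -> 128 threads per CPU).
SERVERS = 64
CPUS_PER_SERVER = 2
CORES_PER_CPU = 64
THREADS_PER_CORE = 2


def cluster_cores(servers: int, cpus_per_server: int, cores_per_cpu: int) -> int:
    """Total physical cores across the whole cluster."""
    return servers * cpus_per_server * cores_per_cpu


total_cores = cluster_cores(SERVERS, CPUS_PER_SERVER, CORES_PER_CPU)
total_threads = total_cores * THREADS_PER_CORE

print(f"cores:   {total_cores:,}")    # cores:   8,192
print(f"threads: {total_threads:,}")  # threads: 16,384
```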

Moving on to that headline memory configuration, the LLNL blog says that each server in the Mammoth cluster packs in 2TB of DRAM and 4TB of non-volatile memory. The total memory available to the cluster is therefore 128TB of DRAM and 256TB of NVRAM. That is 'Big Memory'.
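The cluster-wide totals are simply the per-server figures multiplied by 64. To put 'Big Memory' in perspective, the sketch below also works out DRAM per core. That last figure is derived here rather than quoted by LLNL, and it assumes decimal units (1TB = 1,000GB) and 128 cores per server (two 64-core CPUs):

```python
# Per-server figures from the article.
SERVERS = 64
DRAM_TB_PER_SERVER = 2
NVRAM_TB_PER_SERVER = 4
# Assumption: two 64-core CPUs per server, as described earlier.
CORES_PER_SERVER = 2 * 64
# Assumption: decimal units, 1 TB = 1,000 GB.
GB_PER_TB = 1000

total_dram_tb = SERVERS * DRAM_TB_PER_SERVER
total_nvram_tb = SERVERS * NVRAM_TB_PER_SERVER
dram_gb_per_core = DRAM_TB_PER_SERVER * GB_PER_TB / CORES_PER_SERVER

print(f"DRAM total:  {total_dram_tb} TB")             # DRAM total:  128 TB
print(f"NVRAM total: {total_nvram_tb} TB")            # NVRAM total: 256 TB
print(f"DRAM/core:   {dram_gb_per_core:.3f} GB")      # DRAM/core:   15.625 GB
```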

LLNL researchers expect Mammoth's memory-heavy design to make it particularly efficient for data-intensive COVID-19 research and pandemic response. It will be used for "genomics analysis, nontraditional HPC simulations and graph analytics required by scientists working on COVID-19, including the development of antiviral drugs and designer antibodies," explains the LLNL blog.

Researchers have already been tasking Mammoth with analysing the genome of the SARS-CoV-2 virus, probing how the virus evolves and exploring possible mutations. According to scientists already working on the cluster, it is cutting the time needed for genomic analysis "from a few days to a few hours." As an example of the new cluster's value, the LLNL blog says that the Rosetta Flex software was memory-limited on other HPC clusters to 12 or 16 simultaneous calculations per node, whereas Mammoth can handle 128 Rosetta Flex calculations simultaneously on a single node.
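Those Rosetta Flex figures amount to roughly an 8x to 10.7x increase in concurrent calculations per node. The sketch below just computes that ratio; it measures how many calculations fit on one node at once, not how fast any single calculation runs, and the function name is purely illustrative:

```python
# Per-node concurrency figures quoted from the LLNL blog.
MAMMOTH_PER_NODE = 128
OTHER_CLUSTER_LIMITS = (12, 16)


def concurrency_gain(mammoth: int, other: int) -> float:
    """How many times more simultaneous calculations fit on a Mammoth node.

    Hypothetical helper: a capacity ratio, not a runtime speedup.
    """
    return mammoth / other


gains = {limit: concurrency_gain(MAMMOTH_PER_NODE, limit) for limit in OTHER_CLUSTER_LIMITS}

for limit, gain in gains.items():
    print(f"{limit:>2} per node elsewhere -> {gain:.1f}x as many on Mammoth")
# 12 per node elsewhere -> 10.7x as many on Mammoth
# 16 per node elsewhere -> 8.0x as many on Mammoth
```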