The last ever International Technology Roadmap for Semiconductors (ITRS) has been published. An interesting prediction within the pages of the report, published earlier this month, is that "the transistor could stop shrinking in just five years," reports IEEE Spectrum. The roadmap is sponsored by industry bodies including the Semiconductor Industry Association, a US trade group which represents such IT giants as IBM and Intel.

According to the president of the Semiconductor Industry Association (SIA), John Neuffer, the roadmap "provides key findings about the future of semiconductor technology and serves as a useful bridge to the next wave of semiconductor research initiatives". As miniaturisation hits its limits, the chip-making industry is expected to turn further to 3D structures in transistors and circuits. The memory industry has already gone big on 3D, and monolithic 3D integration still holds a lot of promise, as recent news of BeSang Inc's 3D Super-NAND shows.

Process miniaturisation has already become so difficult that only a few highly successful companies have managed to hang on. IEEE Spectrum says this difficulty has cut the number of companies developing and manufacturing leading-edge transistors from 19 in 2001 to just four in 2016: Intel, TSMC, Samsung, and GlobalFoundries. This fiercely competitive quartet doesn't want to work together through the ITRS, as each now has its own roadmap, and that's why the latest ITRS roadmap will be the last.

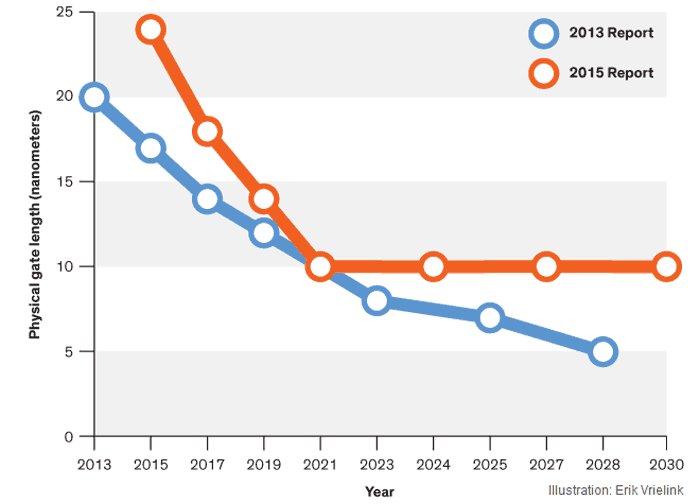

Moore's Law turned 50 in April 2015, but with this kind of roadblock in the way it doesn't look to have a long lifespan left if the industry relies only on miniaturisation to go forward. Slightly earlier last year the Law was predicted to keep up its trend until 7nm was reached; after that, we enter the unknown for industry progress. Late last year the ITRS was looking at possible tech to keep Moore's Law on track beyond process shrinkages and pushed forward ideas such as "new kinds of transistors and memory devices, neuromorphic computing, superconducting circuitry, and processors that use approximate instead of exact answers."