Various benchmarks for an Intel Gen11 GT2 GPU have surfaced on the internet. These GPUs are expected to appear in CPUs codenamed Ice Lake later this year. From leaks unearthed just ahead of the weekend, it looks like Intel has pushed the envelope, and its integrated graphics will give Nvidia GeForce MX GPUs and AMD APUs much stiffer competition.

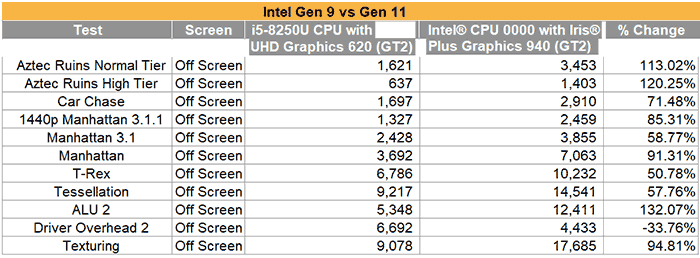

As well as GFXBench and CompuBench scores, this Reddit thread provides some eyebrow-raising comparisons of the Intel Gen11 GT2 against the Intel Gen9 GT2, the Ryzen 2700U and the Ryzen 2400G. The charts speak for themselves, but the Intel Gen11 GT2, also known as the Iris Plus Graphics 940, is significantly faster than both the Gen9 Intel GPU and the Ryzen 2700U with Vega 10. Compared against the Ryzen 2400G with Vega 11 graphics, it is about 25 per cent slower on average in the given tests. As NoteBookCheck comments, Skylake generation GT2 GPUs offered 24 EUs, while the Ice Lake GPU will feature up to 64 EUs and a 4x larger L3 cache.

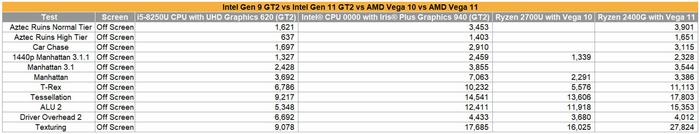

Intel UHD Graphics 620 vs Intel Iris Plus Graphics 940

The generational divide, as charted above, is huge. On average the Gen11 GPU is about 75 per cent faster, and it falls behind in just one test, Driver Overhead 2. Going purely by the name of this test, that result might have something to do with the driver software not yet being tuned for the new GPU.
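For readers who want to sanity check an "on average" figure like this against a chart, the arithmetic can be sketched as below. This is only an illustration: the test names and scores are placeholders invented for the example, not the leaked numbers, and the source does not say which kind of average was used, so both a simple mean of the per-test gains and a geometric mean of the ratios are shown.

```python
from statistics import geometric_mean

# Placeholder scores, NOT the leaked results: test -> (older GPU, newer GPU).
# Higher is assumed to be better in every test.
scores = {
    "Test A": (40.0, 72.0),
    "Test B": (100.0, 180.0),
    "Test C": (50.0, 45.0),  # the newer GPU is slower here
}


def percent_change(old: float, new: float) -> float:
    """Return how much faster (positive) or slower (negative) `new` is, in per cent."""
    return (new / old - 1.0) * 100.0


# Per-test gain or loss of the newer GPU relative to the older one.
per_test = {name: percent_change(old, new) for name, (old, new) in scores.items()}

# Simple arithmetic mean of the per-test percentages.
arithmetic_avg = sum(per_test.values()) / len(per_test)

# Geometric mean of the new/old ratios, which is less swayed by one outlier test.
geometric_avg = (geometric_mean(new / old for old, new in scores.values()) - 1.0) * 100.0

# Tests where the newer GPU loses.
regressions = [name for name, change in per_test.items() if change < 0]

for name, change in per_test.items():
    print(f"{name}: {change:+.1f} per cent")
print(f"Arithmetic average: {arithmetic_avg:+.1f} per cent")
print(f"Geometric average:  {geometric_avg:+.1f} per cent")
print(f"Regressions: {regressions}")
```

The two averages can differ noticeably when one test is an outlier, which is one more reason to treat a single headline percentage from leaked charts with caution.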

Intel Iris Plus Graphics 940 vs AMD Vega 10 and Vega 11

Above you can see the Intel Iris Plus Graphics 940 easily boss the Vega 10, and trade blows with an AMD APU featuring Vega 11 graphics.

Intel Gen11 graphics do more than just add performance; they will also herald the arrival of features such as DisplayPort 1.4a support, plus VESA DSC support for 5K 120Hz output. Gen11 is the immediate predecessor of Gen12, upon which Intel's discrete GPUs will be based.

There is still quite some time until the first 'Sunny Cove' based Ice Lake CPUs launch, and please remember to take leaked synthetic benchmarks with a pinch of salt - but the figures are encouraging for those looking to update a thin and light laptop late this year. Such strongly improved integrated graphics could help reduce pricing, power consumption and complexity compared to hybrid graphics designs packing GeForce MX GPUs, for example.