At the end of AMD's Epyc 'New Era in the Datacenter' presentation, CEO Dr Lisa Su came on stage to talk about GPUs, specifically their use in machine intelligence and other fast-growing data centre workloads. She then launched the Radeon Instinct line of accelerators, starting with the MI25. Since the launch event, AMD has published Radeon Instinct MI25 product pages that give an overview of its performance, features, and specifications.

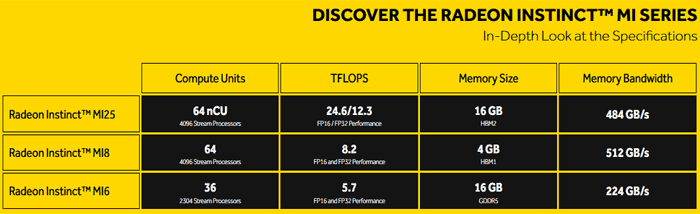

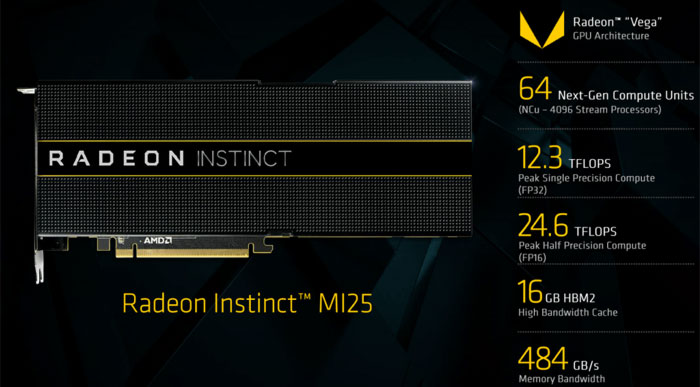

AMD claims this accelerator is the "world’s fastest training accelerator for machine intelligence and deep learning". The key specifications of the Radeon Instinct MI25, as given in AMD's press deck, are listed below.

- GPU Architecture: 14nm FinFET AMD Vega10

- Stream Processors: 4,096

- GPU Memory: 16GB HBM2

- Memory Bandwidth: Up to 484 GB/s

- Performance: Half-Precision (FP16) 24.6 TFLOPS, Single-Precision (FP32) 12.3 TFLOPS, Double-Precision (FP64) 768 GFLOPS

- ECC: Yes

- MxGPU Capability: Yes

- Board Form Factor: Full-Height, Dual-Slot

- Length: 10.5”

- Thermal Solution: Passively Cooled

- Standard Max Power: 300W TDP

- OS Support: Linux 64-bit

- ROCm Software Platform: Yes

- Programming Environment: ISO C++, OpenCL™, CUDA (via AMD’s HIP conversion tool) and Python (via Anaconda’s Numba)
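As a rough sanity check on the quoted throughput figures, the Python sketch below works backwards from the 4,096 stream processors and the 12.3 TFLOPS FP32 figure. It assumes, rather than takes from AMD's list, that each stream processor completes one fused multiply-add (two FLOPs) per clock, that packed FP16 math doubles the FP32 rate, and that FP64 runs at 1/16 of the FP32 rate. The function names are hypothetical, chosen for illustration only.

```python
# Back-of-envelope check of the MI25's quoted peak throughput.
# Assumptions (not stated in AMD's spec list): one FMA (2 FLOPs) per stream
# processor per clock in FP32, packed FP16 at 2x the FP32 rate, FP64 at 1/16.

STREAM_PROCESSORS = 4096        # from the spec list
FLOPS_PER_SP_PER_CLOCK = 2      # one fused multiply-add = multiply + add


def implied_clock_ghz(fp32_tflops: float, sps: int = STREAM_PROCESSORS) -> float:
    """Engine clock (GHz) needed to reach the quoted FP32 peak."""
    return fp32_tflops * 1e12 / (sps * FLOPS_PER_SP_PER_CLOCK) / 1e9


def peak_gflops(clock_ghz: float, sps: int = STREAM_PROCESSORS) -> dict:
    """Peak GFLOPS per precision at a given clock, under the assumed rates."""
    fp32 = sps * FLOPS_PER_SP_PER_CLOCK * clock_ghz
    return {"fp16": fp32 * 2, "fp32": fp32, "fp64": fp32 / 16}


if __name__ == "__main__":
    print(f"implied clock: {implied_clock_ghz(12.3):.3f} GHz")
    for precision, gflops in peak_gflops(1.5).items():
        print(f"{precision}: {gflops:,.0f} GFLOPS")
```

Under those assumptions the FP32 figure implies a peak engine clock of roughly 1.5 GHz, and plugging 1.5 GHz back in reproduces all three quoted numbers (24.6 TFLOPS, 12.3 TFLOPS and 768 GFLOPS) after rounding.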

The Radeon Instinct MI25 wasn't the only member of the family unveiled. AMD also launched the Radeon Instinct MI8 and Radeon Instinct MI6, both based on older GPU architectures. The MI8 uses a Fiji GPU and is aimed at small-form-factor (SFF) HPC, providing 8.2 TFLOPS of peak FP16/FP32 performance at under 175W board power, with 4GB of High-Bandwidth Memory (HBM) on a 4,096-bit memory interface. The MI6 uses a Polaris GPU and delivers 5.7 TFLOPS of peak FP16/FP32 performance at 150W peak board power, with 16GB of GDDR5 memory on a 256-bit memory interface.