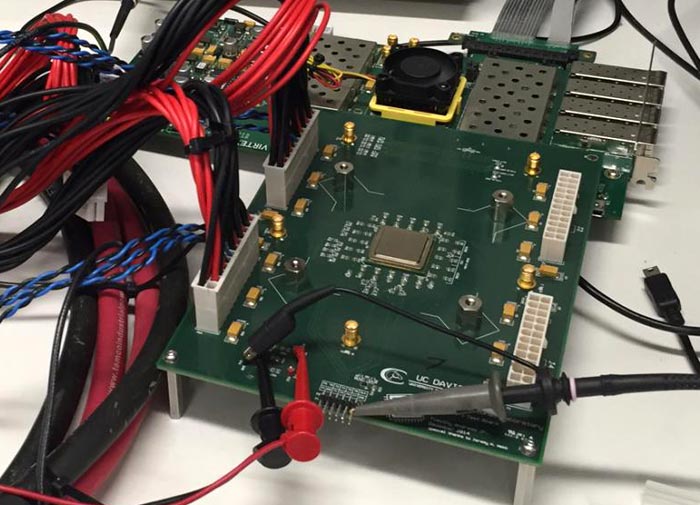

A team of researchers at the University of California, Davis, Department of Electrical and Computer Engineering have revealed an engineering sample of a 1000-core processor chip. Dubbed the KiloCore, this chip contains 621 million transistors and is capable of executing up to 1.78 trillion instructions per second. Clocked lower, it is power efficient enough to be run from a single AA battery.

The KiloCore chip was fabricated by IBM using 32nm CMOS technology. Previous multi-core processor chips have topped out at around 300 cores, says the team. The KiloCore is not only the chip with the most cores ever; it is also "the highest clock-rate processor ever designed in a university," says research project leader Bevan Baas, professor of electrical and computer engineering.

The researchers say that "each processor core can run its own small program independently of the others," which is more flexible than a SIMD approach and results in fast parallel computation with high throughput and lower energy use. Energy use is improved further by the ability to vary the clock of each core and to shut each core down independently. The maximum core clock frequency is 1.78GHz, and at this speed the KiloCore can compute at 1.78 trillion instructions per second. Clocked lower, the researchers say, the KiloCore is efficient enough to execute 115 billion instructions per second "while dissipating only 0.7 Watts, low enough to be powered by a single AA battery".
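As a rough sanity check, the quoted figures can be tied together with some simple arithmetic. The sketch below assumes each core completes at most one instruction per clock cycle; that assumption is not stated by the researchers, but it is what makes 1000 cores at 1.78GHz come out at 1.78 trillion instructions per second. The function and variable names are purely illustrative.

```python
# Back-of-envelope check of the throughput and energy figures quoted above.
# Assumption (not stated in the article): each core retires at most one
# instruction per clock cycle (ipc = 1.0).

NUM_CORES = 1000
MAX_CLOCK_HZ = 1.78e9       # 1.78GHz maximum core clock
LOW_POWER_RATE = 115e9      # instructions per second at the low-power point
LOW_POWER_WATTS = 0.7       # power dissipated at that rate


def peak_instructions_per_second(cores, clock_hz, ipc=1.0):
    """Aggregate instruction rate if every core runs at clock_hz."""
    return cores * clock_hz * ipc


def energy_per_instruction_joules(power_watts, instr_per_sec):
    """Average energy spent per instruction at a given power draw."""
    return power_watts / instr_per_sec


peak = peak_instructions_per_second(NUM_CORES, MAX_CLOCK_HZ)
energy = energy_per_instruction_joules(LOW_POWER_WATTS, LOW_POWER_RATE)

print(f"Peak rate: {peak / 1e12:.2f} trillion instructions per second")
print(f"Energy per instruction at 0.7 W: {energy * 1e12:.2f} pJ")
```

Under that assumption the peak rate matches the quoted 1.78 trillion instructions per second, and the low-power operating point works out to roughly 6 picojoules per instruction on average.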

During the presentation of a working KiloCore sample at the 2016 Symposium on VLSI Technology and Circuits in Honolulu late last week, we learnt that the researchers have readied a compiler and automatic program mapping tools for use in programming the chip. Applications already developed include wireless coding/decoding, video processing, and encryption. The KiloCore is particularly attractive for scientific data applications and data centre record processing, as such tasks require large amounts of data to be processed in parallel.