New features - multidisplay support, NVENC, TXAA

Adaptive V-Sync

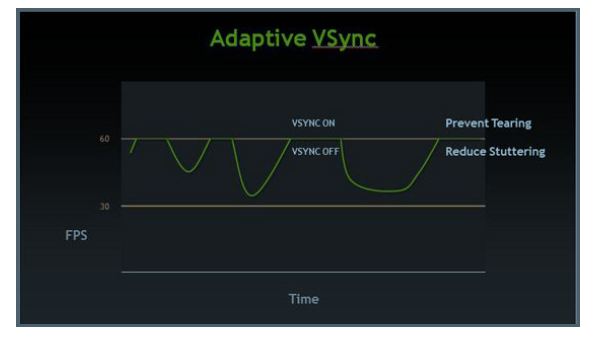

NVIDIA is introducing a feature called Adaptive VSync (AVS). In a nutshell, vertical synchronisation (VSync) marries up the rendering of each frame (image) to the refresh rate of the display, which is usually 60Hz, or 60FPS for the card. Having non-aligned frames and refreshes - this occurs when VSync is switched off - can lead to 'tearing', where the image has noticeable artifacts as the frames are switched at almost arbitrary periods and not in tune with the display.

VSync locks the two together for a silky-smooth image, and is often used on consoles, but problems arise when the frame-rate spikes and drops intermittently. With VSync on, any drop below 60FPS means a frame misses its refresh and has to wait for the next one, so the effective frame-rate falls to a much lower figure, typically 30FPS. As soon as the card can sustain 60FPS again, the two become locked once more. This is one reason for the micro-stutter you may perceive in games.
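To see why the drop is so abrupt, here is a minimal sketch of the arithmetic. It assumes strict double buffering with no triple buffering, which the article does not specify, and `vsynced_fps` is a name made up for illustration rather than anything from NVIDIA's driver.

```python
import math

def vsynced_fps(render_ms: float, refresh_hz: float = 60.0) -> float:
    """Effective frame-rate with VSync on, assuming strict double buffering."""
    refresh_ms = 1000.0 / refresh_hz
    # A finished frame has to wait for the next refresh, so every frame
    # occupies a whole number of refresh intervals (at least one).
    intervals = max(1, math.ceil(render_ms / refresh_ms))
    return refresh_hz / intervals

print(vsynced_fps(10.0))  # rendered well inside one 16.7ms refresh interval
print(vsynced_fps(18.0))  # just misses a refresh, so it waits for the second
print(vsynced_fps(34.0))  # just misses two refreshes
```

Under these assumptions a frame that takes 18ms, only slightly too slow for 60FPS, is held back to 30FPS, and one that takes 34ms falls to 20FPS.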

On the one hand there's the possibility of non-synchronised tearing; on the other, with VSync on, there's the possibility of micro-stutter. NVIDIA is looking to address this problem with Adaptive VSync. Trying to leverage the best of both worlds, AVS has VSync on by default, reducing the possibility of tearing at super-high frame-rates. However, VSync is dynamically switched off as soon as performance drops below 60FPS, thereby reducing the micro-stuttering.
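The switching rule described above can be sketched in a few lines. This is only an illustration of the behaviour as NVIDIA describes it, not the driver's actual logic; `adaptive_vsync_on` and `presented_fps` are hypothetical names, and the 60Hz default is an assumption about the display.

```python
def adaptive_vsync_on(render_fps: float, refresh_hz: float = 60.0) -> bool:
    """VSync stays on only while the card can keep up with the display."""
    return render_fps >= refresh_hz

def presented_fps(render_fps: float, refresh_hz: float = 60.0) -> float:
    """Frame-rate the viewer sees under the adaptive rule."""
    if adaptive_vsync_on(render_fps, refresh_hz):
        # Synchronised: capped at the refresh rate, no tearing.
        return refresh_hz
    # Unsynchronised: frames are shown as they finish (tearing is possible),
    # rather than being held back to a fraction of the refresh rate.
    return render_fps

for fps in (90.0, 60.0, 45.0):
    print(fps, adaptive_vsync_on(fps), presented_fps(fps))
```

The point of the design is the last branch: at 45FPS the viewer gets 45FPS with the risk of some tearing, instead of the sudden fall to 30FPS that plain VSync would impose.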

AVS' settings can be toggled in the control panel, and it's one feature that will find its way to other GeForce cards.

TXAA

A new GPU launch wouldn't be the same without the introduction of a new form of antialiasing. The last iteration of cards brought us Fast Approximate AntiAliasing (FXAA), which is a rather clever technique for getting rid of those cursed jaggy lines. Traditional Multi-Sampled AntiAliasing (MSAA), available in most games, renders the frame at a higher resolution than the one used in the final instance. A number of samples are coloured for each pixel - 2x, 4x or 8x, with higher being better - and these are then down-resolved into the final pixel. By blending in the surrounding detail on a sub-pixel basis the jagged line is, in effect, blended out. The problem here is two-fold: there's an enormous toll on memory bandwidth as the GPU is rendering to a larger-than-displayed image, and MSAA doesn't play well with multiple render targets, used in advanced effects such as deferred shading.
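The down-resolve step can be illustrated with a toy box filter. This follows the simplified description above (render big, then average); hardware MSAA differs in detail, and the `resolve` function and the tiny greyscale image are invented for the example.

```python
def resolve(samples, factor):
    """Average each factor-by-factor block of samples into one final pixel."""
    height = len(samples) // factor
    width = len(samples[0]) // factor
    image = []
    for y in range(height):
        row = []
        for x in range(width):
            block = [
                samples[y * factor + dy][x * factor + dx]
                for dy in range(factor)
                for dx in range(factor)
            ]
            row.append(sum(block) / len(block))
        image.append(row)
    return image

# A hard diagonal edge (0 = black, 1 = white) rendered at twice the
# final resolution in each direction, i.e. four samples per pixel.
samples = [
    [0, 0, 0, 1],
    [0, 0, 1, 1],
    [0, 1, 1, 1],
    [1, 1, 1, 1],
]
print(resolve(samples, 2))  # [[0.0, 0.75], [0.75, 1.0]]
```

The two pixels that straddle the edge come out as intermediate greys rather than pure black or white, which is the blending-out effect described above. The cost is equally visible: sixteen samples had to be stored to produce four pixels.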

FXAA gets around the memory-footprint problem by simply focussing on the pixels in the final image. This post-processing effect, and the post bit is key here, doesn't require huge bandwidth to run its pixel-shader filter - it happens at the end of the pipeline. Image quality is good, and the GPU cost is minimal for a high-end card. However, its late appearance in the pipeline also means that games developers need to code for it in certain circumstances. For example, because FXAA smooths everything, a game's heads-up display (HUD) needs to be drawn after FXAA has done its thing.
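Why the ordering matters can be shown with a one-dimensional toy. The `smooth` function below is a plain three-tap average standing in for a post-process filter; it is not the FXAA algorithm, and both function names are made up for the illustration.

```python
def smooth(row):
    """Stand-in post-process filter: 3-tap average with clamped edges."""
    last = len(row) - 1
    return [
        (row[max(i - 1, 0)] + row[i] + row[min(i + 1, last)]) / 3
        for i in range(len(row))
    ]

def draw_hud(row, hud):
    """Overwrite scene pixels wherever the HUD is opaque (None = transparent)."""
    return [h if h is not None else p for p, h in zip(row, hud)]

scene = [0.0, 0.0, 0.0, 0.0, 0.0]    # a dark strip of the rendered scene
hud = [None, None, 1.0, None, None]  # a single bright HUD pixel

hud_first = smooth(draw_hud(scene, hud))  # HUD drawn before the filter
hud_last = draw_hud(smooth(scene), hud)   # HUD drawn after the filter

print(hud_first)  # the HUD pixel is smeared across its neighbours
print(hud_last)   # the HUD pixel stays crisp
```

Drawing the HUD last keeps text and icons sharp, which is why the engine, rather than the driver alone, has to know where the filter sits in the frame.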

This explanation is a precursor to Temporal Approximate AntiAliasing (TXAA). This technique looks to bring the best out of MSAA and FXAA, and it works by using the sub-pixel accuracy of MSAA and speed of FXAA. In short, TXAA uses a very high-quality resolve filter - the bit that blends the colour - at the end of the pipeline. TXAA is to be made available in two modes: TXAA1, comparable to 8x MSAA but much quicker, and TXAA2, working at the same speed as 2x MSAA yet producing a better, sharper image than we've seen before. We'll be going into greater detail in a separate review. Note that games engines will need TXAA support. NVIDIA says MechWarrior Online, Secret World, Eve Online, Borderlands 2, Unreal 4 Engine, BitSquid, Slant Six Games, and Crytek have all signed up.

NVENC and multi-display

Righting a wrong in the GeForce GTX 500-series, GeForce GTX 680 ships with four independent display outputs: two dual-link DVI, HDMI and DisplayPort. Previously, one required two GeForce cards to run three screens with the optional 3D Surround in tow. This has now been reduced to one, and NVIDIA bests AMD by enabling three-screen usage without the need to purchase any additional adapters. AMD's clock-gen setup is such that the two DVI and single HDMI ports cannot be used together, though Sapphire, AMD's largest partner, has been offering out-of-the-box four-screen usage - including concurrent HDMI and DVI support - through its FleX range of cards for a while now.

Following the lead of Intel's QuickSync and AMD's VCE, NVIDIA also incorporates a hardware-based, fixed-function H.264 encoder for quickety-quick encoding into the popular format. NVIDIA says this low-power encoder is up to 4x faster than encoding on the cores themselves, which is how the job has historically been done. The company cites an example of encoding a 1080p video 8x faster than real time.
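To put the 8x figure in context, here is the arithmetic as a short sketch. The two-hour running time and the 30FPS source frame-rate are assumptions picked for illustration; NVIDIA's example specifies neither.

```python
def encode_seconds(duration_s: float, speed_multiple: float) -> float:
    """Wall-clock time to encode a clip at a given multiple of real time."""
    return duration_s / speed_multiple

def encode_throughput_fps(source_fps: float, speed_multiple: float) -> float:
    """Frames the encoder must get through each second to hit that multiple."""
    return source_fps * speed_multiple

film_s = 2 * 60 * 60  # hypothetical two-hour 1080p film, in seconds

print(encode_seconds(film_s, 8) / 60)  # minutes of encoding at 8x real time
print(encode_throughput_fps(30, 8))    # encoder throughput in frames per second
```

On those assumptions a two-hour film would take 15 minutes to encode, with the encoder chewing through 240 frames every second.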

Much like AMD's approach, the cores and NVENC can be combined for even-faster encoding, with the power-hungry cores doing the grunt pre-processing work and NVENC simply tuned for the encoding task. As NVENC is only exposed in certain ways, one will need to purchase a third-party program to take advantage of it. CyberLink's MediaEspresso is one such program.