GPU Boost - something new

GPU Boost

Taking a leaf out of Intel's book, NVIDIA is implementing a frequency-boosting feature called GPU Boost, and it needs some explainin'. NVIDIA's previous GPUs have run at one set speed, determined by the class of card, with, in recent times, the shader-core operating at twice this base clock. Any GPU engineer will tell you that a modern GPU has multiple clock domains, but for our purposes, let's consider GTX 580 to operate with a general clock of 772MHz and shader-core speed of 1,544MHz.

Now, the GTX 680's core and shader-core clock is 1,006MHz, but this is not the frequency it will operate at during most gaming periods. The GTX 680 has a dedicated microprocessor that amalgamates a slew of data - temperature, actual power-draw, to name but two - and determines if the GPU can run faster. Just like Intel, NVIDIA wants to boost performance when there is TDP headroom to do so, given conducive temperatures, etc.

You see, not all games stress a GPU to the same degree; we know this when looking at the power-draw figures for our own games. Games such as Battlefield 3 don't exact a huge toll, with the GPU functioning at around 75 per cent of maximum power. Switch to 3DMark 11 and the benchmark is significantly more stressful, enough to hit the card's TDP limit. Point here is that the card's performance can be increased in many games without breaking through what NVIDIA believes to be a safe limit, which, by default, is the 195W TDP.

This PCB-mounted microprocessor polls various parts of the card every 100ms to determine if the performance can be boosted. Should there be scope to do so, as explained above, the GPU boosts in both frequency and voltage, along pre-determined steps, though the process is invisible to the end user. After examining numerous popular real-world games, NVIDIA says that every GTX 680 can boost to, on average, a 1,058MHz core/shader speed, with the GPU running up to 1,110MHz for certain titles. As an example, the GPU boosts to 1,084MHz with 1.150V and 1,110MHz with 1.175V: the increase is tied to a voltage jump, too.
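To make the polling idea concrete, here is a minimal sketch of how such a governor might step along a voltage/frequency table. It is our own illustration, not NVIDIA's algorithm: the function names, the 10W safety margin and the voltages for the 1,006MHz and 1,058MHz steps are assumptions, and only the 195W limit, the 100ms polling interval and the 1,084MHz/1,110MHz voltages come from the figures quoted above.

```python
# Hypothetical sketch of a GPU Boost-style governor; not NVIDIA's actual logic.

TDP_WATTS = 195.0        # default power limit quoted in the article
POLL_INTERVAL_S = 0.100  # polling period quoted in the article (not used to sleep here)

# (MHz, volts) steps. Only the 1,084MHz and 1,110MHz voltages are from the
# article; the voltages on the two lower steps are placeholders (assumed).
VF_STEPS = [
    (1006, 1.100),  # base clock; voltage assumed
    (1058, 1.125),  # average boost clock; voltage assumed
    (1084, 1.150),
    (1110, 1.175),
]


def next_step(index, power_watts, margin_watts=10.0):
    """Return the V/F step index to use after one polling interval."""
    if power_watts > TDP_WATTS and index > 0:
        return index - 1  # over budget: drop one step
    if power_watts < TDP_WATTS - margin_watts and index < len(VF_STEPS) - 1:
        return index + 1  # clear headroom: climb one step
    return index          # near the limit, or at either end of the table: hold


def simulate(power_samples, start=0):
    """Feed one power reading per poll and record the resulting clock in MHz."""
    index = start
    clocks = []
    for watts in power_samples:
        index = next_step(index, watts)
        clocks.append(VF_STEPS[index][0])
    return clocks
```

Feeding it a steady, light load (say, four readings of 146W, roughly 75 per cent of TDP) walks the clock up one step per poll until it reaches the top of the table, while a single reading above 195W knocks it back down a step.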

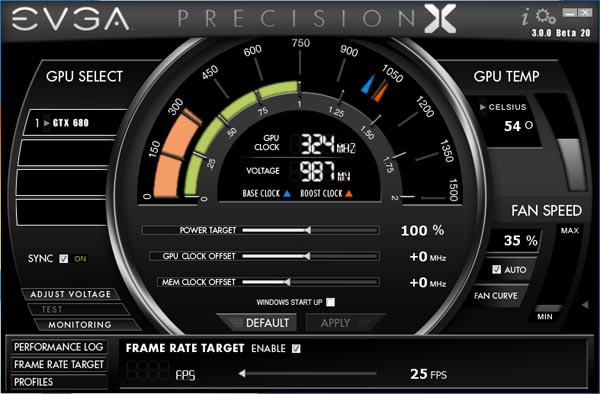

The only method of discerning the clockspeed is, currently, to use EVGA's Precision tool, which provides an overlay of the frequency. The two pictures show the GTX 680 operating at 1,097MHz and 1,110MHz for Batman: Arkham City and Battlefield 3, respectively. This behind-the-scenes overclocking also means that two cards, in two different systems, can produce results that may vary by more than we'd like: a benchmarking nightmare.

You may suppose an easy way around this is to switch off GPU Boost. NVIDIA, however, says that GPU Boost cannot be switched off: the GPU will increase speed no matter what you do. We'll leave it up to you to decide if this is a good move or not.

Going down

Working on a pre-determined curve that marries voltage with frequency, GPU Boost can also reduce the core/shader speed and associated voltage when there's no reason to run at 1,006MHz. The aforementioned chip monitors game load and, should it determine that a low-intensity scene doesn't benefit from the GPU running at its nominal 1,006MHz clock, the core frequency and voltage are reduced. This means power is reduced as well. Clever, eh? Again, though, you won't know it's happening.

Manual overclocking

With all this auto-overclock malarkey, how is the honest-to-goodness enthusiast supposed to overclock the card, and what are partners to do if they want to release custom-designed, factory-overclocked models?

Well, one has to use an approved overclocking utility and manually increase the GPU offset. Doing so shifts the frequency goalposts. Add on 50MHz and everything is shifted 50MHz up, including potential GPU Boost frequencies. The EVGA utility provides an improbable 549MHz offset. Remember the speed/voltage curve we alluded to earlier? Manual overclocking merely serves to put the GPU on a different, higher speed/voltage plane. Though it may be abundantly obvious from the commentary, GPU Boost doesn't impact upon the memory speed; this has to be raised manually.
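The goalpost-shifting is simple arithmetic, sketched below. The clock figures are the ones quoted in this article; the `apply_offset` helper is a name of our own invention, used purely for illustration.

```python
# Illustrative only: a GPU offset moves every point on the curve by the same amount.

BASE_MHZ = 1006
BOOST_MHZ = [1058, 1084, 1110]  # boost clocks quoted in the article


def apply_offset(clocks_mhz, offset_mhz):
    """Shift each clock on the curve by the chosen GPU offset."""
    return [clock + offset_mhz for clock in clocks_mhz]


shifted_base = BASE_MHZ + 50             # 1056
shifted_boost = apply_offset(BOOST_MHZ, 50)  # [1108, 1134, 1160]
```

Whether the card actually reaches those shifted boost clocks still depends on there being power headroom to do so.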

Yet there is more than one way to skin this particular overclocking kitty. Think back to how the GPU overclocks: it uses the 'spare' TDP and boosts the core/shader frequency accordingly. Manually increasing the clocks helps, sure, but if there is no TDP available to force the higher-than-default clocks, the GPU Boost feature is compromised. This is why NVIDIA also has a Power Target feature, which increases the TDP level to, potentially, 32 per cent above default.

Now we're at an interesting juncture, because one can overclock at least three ways. Keep everything at default speeds and raise the Power Target and the GTX 680 should boost to higher speeds - remember, there is more TDP headroom now. Conversely, one can raise the GPU offset and force the extra frequency, though it may not take effect because of limitations in TDP. The third way, one of concurrently raising the Power Target and GPU offset, is the best method; it gives the GPU TDP headroom to breathe and, thus, any offset is likely to be realised. Phew, hope you got all that!
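For those who prefer numbers, the three approaches can be compared with a toy model. Be warned that it rests on a crude assumption of ours: that power-draw scales linearly with clockspeed for a given game, ignoring the voltage increase that accompanies each boost step. The function names and the 190W example load are hypothetical; the 195W TDP, the 1,110MHz top boost clock and the 32 per cent Power Target ceiling are from the text.

```python
# Toy model of Power Target vs. GPU offset; the linear power scaling is an assumption.

TDP_WATTS = 195.0
BASE_MHZ = 1006
TOP_BOOST_MHZ = 1110


def power_limit(power_target_pct=0.0):
    """Power budget in watts after raising the Power Target by the given percentage."""
    return TDP_WATTS * (1 + power_target_pct / 100)


def achieved_clock(watts_at_base, offset_mhz=0, power_target_pct=0.0):
    """Highest clock (MHz) the card reaches for a game drawing watts_at_base at 1,006MHz."""
    requested = TOP_BOOST_MHZ + offset_mhz
    # Clock at which the assumed linear power model hits the power budget.
    power_capped = BASE_MHZ * power_limit(power_target_pct) / watts_at_base
    return int(min(requested, power_capped))


demanding_game = 190.0  # hypothetical load: 190W at the base clock
default_clock = achieved_clock(demanding_game)
offset_only = achieved_clock(demanding_game, offset_mhz=50)
power_target_only = achieved_clock(demanding_game, power_target_pct=32)
both = achieved_clock(demanding_game, offset_mhz=50, power_target_pct=32)
```

In this made-up case the offset alone changes nothing because the card is already pinned against its power budget, the Power Target alone unlocks the stock 1,110MHz boost, and only the combination delivers the full shifted clock.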

Frame-rate control

We've written ad nauseam about how granular this GPU Boost technology is. It is the conductor of this particular GTX 680 frequency orchestra. Such control is also manifested by a super-cool function called Frame-rate target. Click enable and set the desired in-game setting to anywhere between 25FPS and 65FPS and away you go. This function locks the frame-rate at the desired level, give or take an FPS, and it's really rather useful if, for example, the game you play on a GTX 680 produces super-high frame-rates that aren't necessarily needed.

Reduce the frame-rate to, say, a locked 50FPS and the card should, via GPU Boost, knock down core and voltage, leading to a quieter, more power-efficient gaming experience.

As an example, we manually inputted a desired 25FPS (purely for exposition purposes) and the GPU throttled down to 589MHz core. The game's pretty smooth and the at-wall power-draw is just 100W. Here, then, frame-rate determines power, not the other way around.
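Why a lower frame-rate target lets the clock fall can be sketched with another toy model. It assumes, purely for illustration, that frame-rate scales linearly with core clock; real games rarely behave so neatly, and this is not how NVIDIA picks the clock. The function name and the 100FPS uncapped figure are hypothetical, while the 25-65FPS range and the 1,006MHz base clock are from the text.

```python
# Toy estimate of the clock needed to hold a frame-rate target.
# Assumes FPS scales linearly with core clock, which is a simplification.
import math

BASE_MHZ = 1006
MIN_TARGET_FPS, MAX_TARGET_FPS = 25, 65  # range offered by Frame-rate target


def clock_for_target(target_fps, uncapped_fps_at_base):
    """Lowest clock (MHz) that sustains target_fps under the linear assumption."""
    if not MIN_TARGET_FPS <= target_fps <= MAX_TARGET_FPS:
        raise ValueError("target must be between 25 and 65 FPS")
    if target_fps >= uncapped_fps_at_base:
        return BASE_MHZ  # no slack to give back; this model stays at the base clock
    return math.ceil(BASE_MHZ * target_fps / uncapped_fps_at_base)


# Hypothetical game that runs at 100FPS uncapped at the base clock.
half_rate_clock = clock_for_target(50, 100)  # 503
```

The model makes no attempt to reproduce the 589MHz we observed; it merely shows the direction of travel, with a lower target needing a lower clock and, in turn, less voltage and power.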