Power optimisations

Thinking back to the Zen architecture itself, AMD has endowed it with thousands of sensors that monitor temperature, voltage, power and frequency. Each of these is plumbed into algorithms that then set the optimum balance between frequency and voltage at any given point in time. This is usual for modern processors, but where AMD says it differs is in the granularity and speed of the on-the-fly changes.

We mention this because such precise adjustments are far more valuable in the server space, where shaving a few watts here and there from a rack can add up to significant power savings across the datacentre. Even with all the other components present in a box - memory, NIC(s), HDD, fans, etc. - the CPU(s), understandably, consume over 50 per cent of the total power of a standard, GPU-less 2P server.

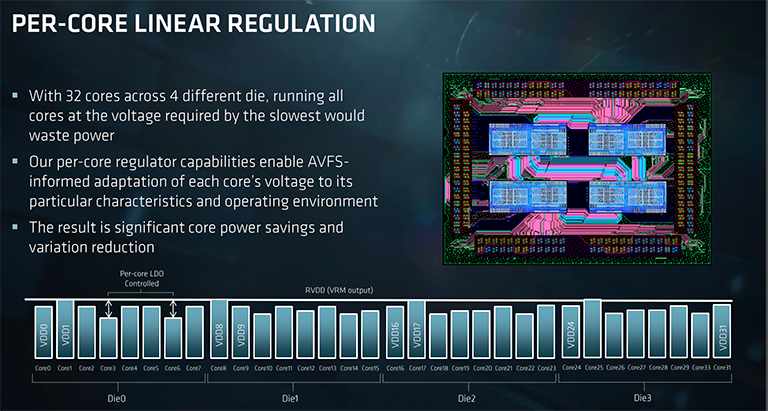

Therefore, each of the up to 32 cores within Epyc has its own regulator that governs voltage for a given frequency. As you would expect, silicon doesn't have perfectly even characteristics across the die, meaning that some cores will require higher voltage than others to run at a particular speed. Using the latest adaptive voltage frequency scaling (AVFS), AMD reckons that each core's voltage can be tuned to within 2mV for the desired frequency, which is finer granularity than the regulator itself allows.
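To see why per-core voltage matters, here is a minimal Python sketch. It assumes the usual rule of thumb that dynamic power scales with voltage squared times frequency, and it compares a shared rail, where every core runs at the voltage the weakest core needs, against per-core tuning. The voltages and the function names are our own illustrative inventions, not AMD figures.

```python
# Toy model: dynamic power is taken as proportional to V^2 * f.
# All voltages below are made-up illustrative values, not AMD data.

def relative_power(voltage_v: float, freq_ghz: float) -> float:
    """Relative dynamic power for one core (constants folded away)."""
    return voltage_v ** 2 * freq_ghz


def shared_rail_power(min_voltages: list[float], freq_ghz: float) -> float:
    """Every core is fed the voltage that the weakest core requires."""
    worst_case = max(min_voltages)
    return sum(relative_power(worst_case, freq_ghz) for _ in min_voltages)


def per_core_power(min_voltages: list[float], freq_ghz: float) -> float:
    """Each core is fed only its own minimum stable voltage."""
    return sum(relative_power(v, freq_ghz) for v in min_voltages)


# Hypothetical minimum stable voltages for four cores at 2.2GHz.
min_voltages = [0.900, 0.912, 0.934, 0.950]

shared = shared_rail_power(min_voltages, 2.2)
tuned = per_core_power(min_voltages, 2.2)
saving_pct = 100 * (shared - tuned) / shared

print(f"Per-core tuning saves about {saving_pct:.1f}% in this toy case")
```

The absolute numbers mean nothing, but the shape of the result is the point: the wider the spread in silicon quality across the die, the more a single shared voltage wastes.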

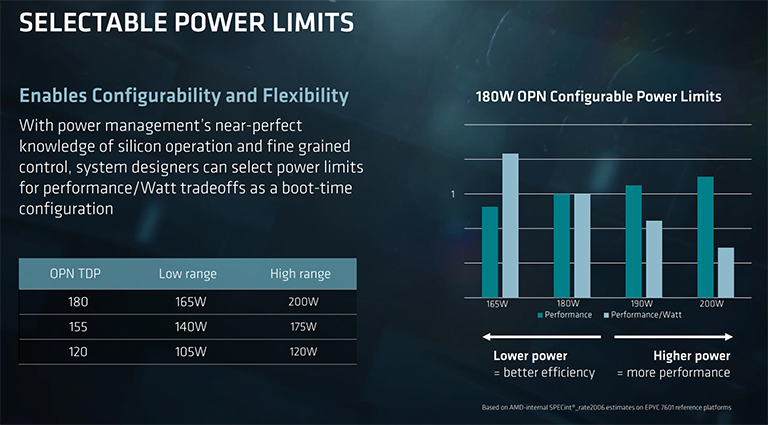

Interestingly, the TDPs quoted on the first page, ranging from 120W through to 180W, are configurable through the BIOS for an OEM with enough knowledge of cooling. Take the top-bin Epyc 7601 as an example. Where power consumption is less of a concern, that chip can be hiked to 200W with the extra energy driven towards higher speeds and voltage. Of course, the gains will be limited, because AMD already has an optimum speed/voltage curve, but it's possible to eke out that bit more performance.

The same chip can be driven at a lower voltage/speed in order to increase the performance-per-watt metric in power-constrained environments, too, and this is why each processor has a range. Final boost speeds will depend upon just how this configurability is adopted, but you can have a reasonable range of curves for each chip.

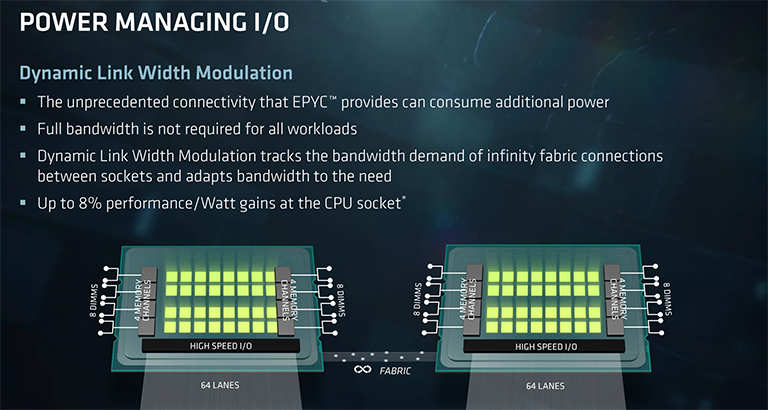

Remember that aggregate 128-link Infinity Fabric interconnect between chips in a 2P environment? Lighting up 150GB/s of data traffic doesn't help with respect to power consumption, understandably, so in cases where the workload is far more bound by compute, this link dynamically reduces speed and voltage in order to save power, which is then reinvested into more per-chip compute. It's an obvious way of ensuring that each watt is used sensibly.
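The idea of handing fabric power back to the cores can be sketched in a few lines of Python. This is only a toy under stated assumptions: the package budget is fixed, fabric power scales linearly with link utilisation down to a floor, and every wattage and the `rebalance` helper itself are hypothetical. AMD has not described its actual control algorithm in this detail.

```python
def rebalance(package_budget_w: float,
              fabric_full_w: float,
              fabric_utilisation: float,
              fabric_floor_w: float) -> tuple[float, float]:
    """Split a fixed package budget between fabric and compute.

    Assumes fabric power scales linearly with utilisation (0.0 to 1.0)
    but never drops below a floor needed to keep the link alive.
    """
    fabric_w = max(fabric_floor_w, fabric_full_w * fabric_utilisation)
    compute_w = package_budget_w - fabric_w
    return fabric_w, compute_w


# Hypothetical figures: 180W package, 20W fabric at full tilt, 5W floor.
busy_link = rebalance(180.0, 20.0, 1.0, 5.0)   # traffic-heavy workload
quiet_link = rebalance(180.0, 20.0, 0.1, 5.0)  # compute-bound workload

print("busy link  (fabric W, compute W):", busy_link)
print("quiet link (fabric W, compute W):", quiet_link)
```

In the compute-bound case the fabric falls back to its floor and the cores inherit the difference, which is the behaviour AMD describes.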

Security

No exposition of a modern server processor would be complete without touching upon security.

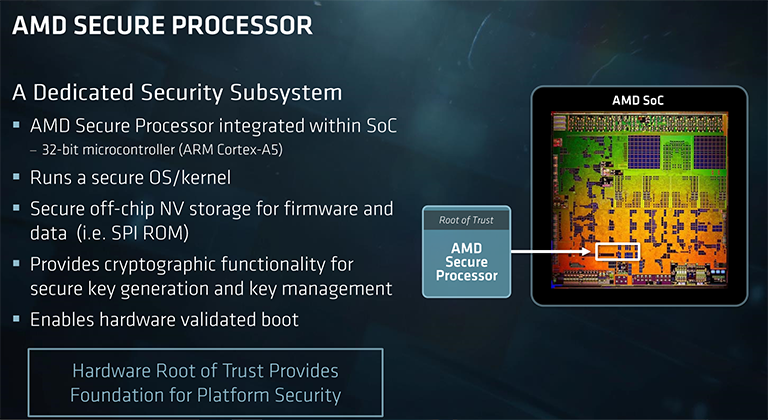

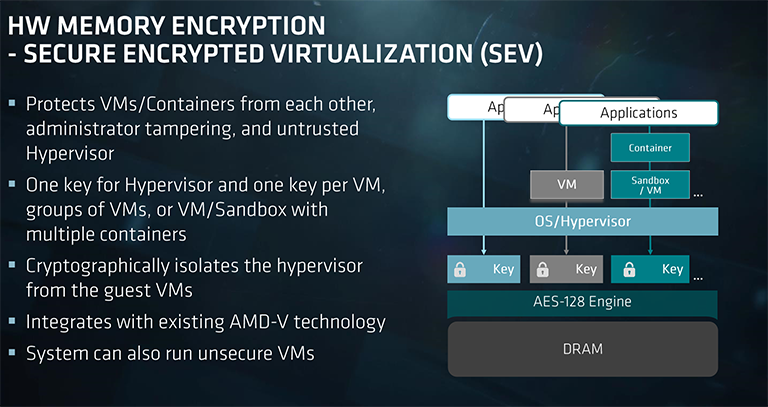

AMD adds in an ARM-based Cortex-A5 Secure Processor within the silicon of every Zen core. This little chip's job is to provide hardware-based support for two new technologies called Secure Memory Encryption (SME) and Secure Encrypted Virtualisation (SEV).

SME offers real-time memory encryption, set up at boot time. It works by marking pages of memory as encrypted through the page-table entries. What this means is that any kind of memory can be AES-encrypted to guard against physical memory attacks. The memory isn't hidden in any meaningful way, of course, but any snooping or accesses will show it as encrypted. A single encryption key is generated and stored on chip. AMD says that such encryption, run via a couple of AES engines, causes minimal access-latency increases.
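Marking a page as encrypted comes down to flipping one bit in its page-table entry. The Python sketch below shows the bit manipulation only. The bit position used here (47) is an assumption for illustration, as real hardware reports the position through CPUID, and the helper names and the sample entry are hypothetical.

```python
# Assumed position of the encryption bit in a 64-bit page-table entry.
# Real hardware reports the position via CPUID; 47 is illustrative only.
C_BIT_POSITION = 47
C_BIT_MASK = 1 << C_BIT_POSITION


def mark_encrypted(pte: int) -> int:
    """Return the entry with the encryption bit set."""
    return pte | C_BIT_MASK


def mark_plain(pte: int) -> int:
    """Return the entry with the encryption bit cleared."""
    return pte & ~C_BIT_MASK


def is_encrypted(pte: int) -> bool:
    """True if the entry asks for the page to be encrypted."""
    return bool(pte & C_BIT_MASK)


# Hypothetical entry: a frame address plus present/writable flag bits.
pte = 0x0000_0001_2345_6003

encrypted_pte = mark_encrypted(pte)
print(f"plain entry:     {pte:#018x}  encrypted={is_encrypted(pte)}")
print(f"encrypted entry: {encrypted_pte:#018x}  encrypted={is_encrypted(encrypted_pte)}")
```

Because the decision lives in the page tables, the operating system can choose page by page what gets encrypted, while the AES work itself happens in hardware on the way to and from DRAM.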

Leveraging the increasing number of cores and threads within a modern server means that multiple virtual machines can be run on it. Software known as a hypervisor emulates the hardware and enables these virtual machines to function. So, for example, 'your' remote server may be one of a number of servers, virtualised, and run on a single physical machine in the cloud. Ensuring that VMs are protected from compromised hypervisors is becoming increasingly important.

This is where the SEV technology comes in, according to AMD. SEV encrypts parts of the memory shared by virtual machines - this is your 'part' of the server - and issues a unique key to each VM. The point is that a compromised hypervisor knows that multiple guest VMs are running but is not able to access their contents, because their memory is cryptographically isolated.
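A toy Python model makes the isolation argument concrete. Note the assumptions: the cipher here is a throwaway SHA-256 keystream standing in for the hardware AES engines, purely so the example runs with the standard library, and the `ToyMemoryController` class, its methods and the VM names are hypothetical. None of this reflects how SEV is actually programmed.

```python
import hashlib
import secrets


def keystream(key: bytes, length: int) -> bytes:
    """Toy keystream from SHA-256 in counter mode. NOT AES, illustration only."""
    out = bytearray()
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + counter.to_bytes(8, "big")).digest()
        counter += 1
    return bytes(out[:length])


def xor_cipher(key: bytes, data: bytes) -> bytes:
    """Encrypt or decrypt by XORing the data with the keystream."""
    return bytes(a ^ b for a, b in zip(data, keystream(key, len(data))))


class ToyMemoryController:
    """Holds one key per guest; the keys never leave this object."""

    def __init__(self) -> None:
        self._keys: dict[str, bytes] = {}
        self.dram: dict[str, bytes] = {}  # what a snooping hypervisor sees

    def launch_vm(self, vm_id: str) -> None:
        self._keys[vm_id] = secrets.token_bytes(16)  # unique key per VM

    def write(self, vm_id: str, data: bytes) -> None:
        self.dram[vm_id] = xor_cipher(self._keys[vm_id], data)

    def read(self, vm_id: str) -> bytes:
        return xor_cipher(self._keys[vm_id], self.dram[vm_id])


controller = ToyMemoryController()
controller.launch_vm("vm-a")
controller.launch_vm("vm-b")

secret = b"guest secret"
controller.write("vm-a", secret)
controller.write("vm-b", secret)

print("vm-a reads back:  ", controller.read("vm-a"))
print("hypervisor sees a:", controller.dram["vm-a"].hex())
print("hypervisor sees b:", controller.dram["vm-b"].hex())
```

Both guests store the same bytes, yet the raw memory looks different for each and matches neither plaintext, which is all a compromised hypervisor gets to look at.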