Introduction

Solid-state drives (SSDs) are one of the best PC innovations of the last decade. Near-instant access, noiseless operation and low energy consumption drive a better user experience in various form factors.

Concentrating on the home PC for a moment, most SSDs are presented in a 2.5in form factor and connect to the system via the SATA 6Gbps interface. Other formats are available, including mSATA and nascent M.2, but regular SATA continues to hold sway for the majority of drives.

But there are two problems with using SATA for the long term. The first is a lack of bandwidth imposed by the interface, topping out at a usable 550MB/s or so. The second is the transfer protocol used to communicate between drive and system host controller, which is predominantly Advanced Host Controller Interface (AHCI).

Now, while AHCI has been a good fit for mechanical drives and first-generation SSDs, it is preferable for newer, faster drives to interface with the motherboard through the PCI Express bus, either via a standard expansion slot or via the new M.2 connector. AHCI, in this regard, isn't as efficient as drive manufacturers would like, and it holds back the performance of modern SSDs. Enter NVMe.

NVMe to the PCIe rescue

The Non-Volatile Memory Host Controller Interface Specification (NVMHCI, better known as NVMe or NVM Express) is purpose-designed to exploit the naturally low latency and greater inherent data-transfer parallelism available to drives running off the PCIe bus.

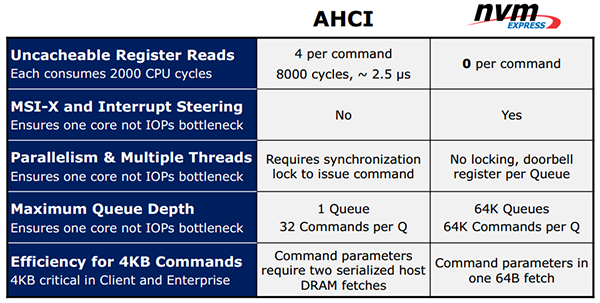

This exploitation of an SSD's latency and parallelism qualities shows up in three ways: a much larger queue depth, fine-grained interrupt steering, and improved efficiency. The efficiency gain comes from abolishing the need to lock threads before issuing commands, and from the ability to receive command parameters - the work needing to be done - in one 64-byte fetch instead of two with AHCI.
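To make the single 64-byte fetch concrete, here is a minimal Python sketch that packs a Read command into one submission queue entry. The field layout follows the public NVMe specification as we understand it; the function name, its parameters and the example values are our own illustrative assumptions, and this is not driver code.

```python
import struct

# One NVMe submission queue entry, little-endian, 64 bytes in total:
#   CDW0 (opcode, flags, command ID), namespace ID, 8 reserved bytes,
#   metadata pointer, two data pointers (PRP1/PRP2), then CDW10-CDW15.
SQE_FORMAT = "<IIQQQQ6I"
NVME_OPCODE_READ = 0x02  # Read opcode in the NVM command set


def build_read_command(command_id, namespace_id, start_lba, block_count, buffer_addr):
    """Pack an illustrative Read command (hypothetical helper, not a driver API)."""
    cdw0 = NVME_OPCODE_READ | (command_id << 16)  # opcode in bits 7:0, ID in bits 31:16
    cdw10 = start_lba & 0xFFFFFFFF                # starting LBA, low 32 bits
    cdw11 = start_lba >> 32                       # starting LBA, high 32 bits
    cdw12 = (block_count - 1) & 0xFFFF            # block count is stored zero-based
    return struct.pack(
        SQE_FORMAT,
        cdw0, namespace_id,
        0,            # reserved
        0,            # metadata pointer (unused here)
        buffer_addr,  # PRP1: where the data should land in host memory
        0,            # PRP2 (unused for a small transfer)
        cdw10, cdw11, cdw12, 0, 0, 0,
    )


entry = build_read_command(command_id=1, namespace_id=1,
                           start_lba=2048, block_count=8, buffer_addr=0x1000)
print(len(entry))  # 64
```

Everything the controller needs to carry out the read sits in that one fixed-size structure, which is why a single fetch is enough.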

The following table briefly explains the benefits of NVMe vs. AHCI as it pertains to PCIe-based SSDs. Enough acronyms already!

Having much larger queue depths enables today's multi-core CPUs to remove the potential IOPS bottleneck imposed by using a single core, which is how AHCI predominantly works. For storage-intensive tasks it's pointless to waste the potential provided by the latest Intel and AMD CPUs, much in the same way as older games fail to engage multiple processor cores for smoother rendering.
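One rough way to see why queue depth matters is Little's law: the number of commands completed per second cannot exceed the number in flight divided by the time each one takes. The sketch below applies that to the commonly quoted limits of one 32-command queue for AHCI and roughly 64K queues of 64K commands for NVMe. The 100-microsecond latency and the four-core example are purely illustrative assumptions of ours, not measurements of any drive.

```python
def iops_ceiling(outstanding_commands, latency_us):
    """Little's law: throughput ceiling = commands in flight / time per command."""
    return outstanding_commands * 1_000_000 / latency_us


# Commonly quoted protocol limits (queues, commands per queue).
AHCI_QUEUES, AHCI_DEPTH = 1, 32
NVME_QUEUES, NVME_DEPTH = 64 * 1024, 64 * 1024

ASSUMED_LATENCY_US = 100  # illustrative assumption, not a measured figure

# AHCI: every core funnels into the same 32-slot queue.
ahci_ceiling = iops_ceiling(AHCI_QUEUES * AHCI_DEPTH, ASSUMED_LATENCY_US)

# NVMe: hypothetical four-core CPU, each core with its own 32-deep queue.
four_core_ceiling = iops_ceiling(4 * 32, ASSUMED_LATENCY_US)

print(f"AHCI ceiling:           {ahci_ceiling:,.0f} IOPS")
print(f"NVMe, 4 queues of 32:   {four_core_ceiling:,.0f} IOPS")
print(f"NVMe slots by protocol: {NVME_QUEUES * NVME_DEPTH:,}")
```

In practice the controller and flash set the real limit long before the protocol maximum is reached; the point is only that NVMe stops the queue itself from being the constraint.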

Bypassing thread locking and eliminating what is known as uncacheable register reads per command provides for lower overall latency and overhead, pushing up the number of potential IOPS available to the system. NVMe, in a nutshell, offers a means for the SSD controller and flash to work at peak speeds for longer periods.

Operating system support is baked into Windows 8.1, while the appropriate drivers have been added to Windows 7 through updates. Linux, FreeBSD and Chrome OS have NVMe support as well.

Does NVMe work on my computer?

NVMe drives, either standard PCIe or M.2, can be used as bootable media if the motherboard's BIOS supports the correct form of UEFI implementation. Intel, for example, says that motherboards with a UEFI 2.3.1, or later, BIOS should recognise an NVMe drive just fine, though it recommends using Windows 8/8.1 for the best compatibility.
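For readers on Linux, a quick check along these lines can be sketched in Python. It assumes a reasonably recent kernel that exposes NVMe controllers under `/sys/class/nvme` and creates `/sys/firmware/efi` when the system was booted through UEFI. The function names and the `root` parameter are our own, added so the logic can be pointed at a test directory. It only reports what the running system sees, and cannot tell you whether the BIOS will boot from the drive.

```python
import os


def booted_via_uefi(root="/"):
    """True if the kernel exposes the EFI firmware directory (UEFI boot)."""
    return os.path.isdir(os.path.join(root, "sys/firmware/efi"))


def list_nvme_controllers(root="/"):
    """Return (controller name, model string) pairs, or an empty list if none."""
    base = os.path.join(root, "sys/class/nvme")
    if not os.path.isdir(base):
        return []
    controllers = []
    for name in sorted(os.listdir(base)):
        try:
            with open(os.path.join(base, name, "model")) as handle:
                model = handle.read().strip()
        except OSError:
            model = "unknown"  # attribute missing or unreadable
        controllers.append((name, model))
    return controllers


if __name__ == "__main__":
    print("UEFI boot:", booted_via_uefi())
    for name, model in list_nvme_controllers():
        print(f"{name}: {model}")
```

An empty controller list simply means the kernel has not detected an NVMe device; it says nothing about whether the motherboard could support one.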

In the consumer space, MSI has already stated that, with a BIOS update, all of its X99/Z97/H97 boards support NVMe devices under both Windows 7 64-bit and Windows 8.1 64-bit. Asus, too, lists support for these new drives and, unlike others, on selected boards - X99 Sabertooth TUF being a good example - offers what it calls a Hyper Kit, where an M.2 card connects with an NVMe-supporting mini-SAS (SFF-8639) port on the other side. The purpose here is to use four-lane PCIe with a new breed of 2.5in drives featuring, you guessed it, NVMe support.

I'm sold: which drives support NVMe, then?

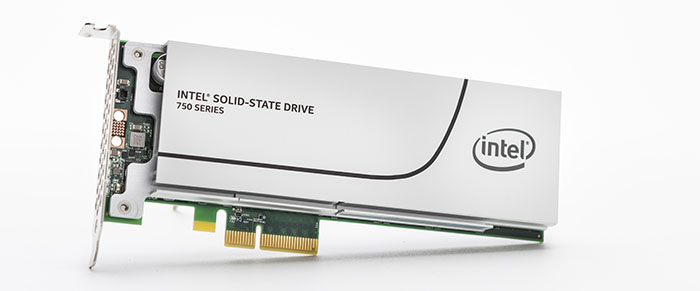

The primary differences between NVMe drives and older AHCI drives are form factor and the need to house an NVMe controller on the device side. Marvell and SandForce are two of the bigger names that plan to launch NVMe-compatible controllers in the near future. Another is Intel, whose SSD 750 Series drives are launching today in 400GB and 1.2TB capacities, following on from the enterprise-specific P-series released last year.

These two new Intel SSD 750 drives are to be made available as a half-height PCIe Gen 3 x4 add-in card and, alluding to the Asus X99 TUF discussion earlier, as 2.5in drives cabled from their SFF-8639 connector through to a full-bandwidth M.2 slot on the motherboard by way of the aforementioned Hyper Kit.

It's important to appreciate that maximum performance is only achieved when the drive is connected directly to the CPU via a PCIe Gen 3 x4 interface. This is no problem on the X99 chipset, as the expansion lanes are wired to the CPU, but bandwidth may be restricted on cheaper Z97/H97 boards that attach the drive via the PCH and then through DMI - if interested in these new drives, do look out for boards that promise full-bandwidth M.2 and PCIe access.
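The gap between these connections can be estimated from transfer rate, lane count and line encoding. The sketch below is a back-of-the-envelope calculation rather than a benchmark. It assumes DMI 2.0 behaves like four PCIe Gen 2 lanes, and it gives raw link rates before protocol overhead, so usable figures are lower (SATA's 600MB/s raw becomes the 550MB/s or so quoted earlier). The function name and parameters are our own.

```python
def link_bandwidth_mb_s(gigatransfers_per_s, lanes, payload_bits, line_bits):
    """Raw one-way link bandwidth in MB/s after line-encoding overhead."""
    bits_per_s = gigatransfers_per_s * 1e9 * lanes * payload_bits / line_bits
    return bits_per_s / 8 / 1e6


links = {
    # name: (GT/s per lane, lanes, payload bits, line bits)
    "SATA 6Gbps": (6, 1, 8, 10),                        # 8b/10b encoding
    "DMI 2.0 (assumed PCIe Gen 2 x4)": (5, 4, 8, 10),   # 8b/10b encoding
    "PCIe Gen 3 x4": (8, 4, 128, 130),                  # 128b/130b encoding
}

for name, params in links.items():
    print(f"{name}: {link_bandwidth_mb_s(*params):,.0f} MB/s")
```

Bear in mind that the DMI link is shared with everything else hanging off the PCH, so a drive attached that way competes with SATA ports, USB and networking for the same bandwidth.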

SSD 750 1.2TB PCIe

Intel provided us with the half-height PCIe Gen 3 x4 add-in card SSD 750 1.2TB for performance evaluation.

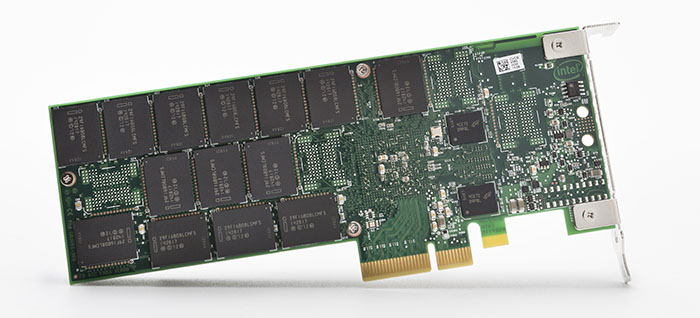

Storage aficionados will instantly recognise the familial similarity between the enthusiast- and workstation-orientated SSD 750 and its older, bigger, more expensive cousins, the P3700, P3600 and P3500 SSDs. The trio of drives use the NVMe conduit, are available in 2.5in (SFF-8639) and add-in card form factors, and, optimally, connect to the motherboard via a PCIe Gen 3 x4 interface. This last point is important because it provides a usable 3,200MB/s of bandwidth, up from the 550MB/s of SATA.

The aluminium heatsink comes in useful considering the drive typically pulls up to 25W when in full-on writing mode, dropping to 4W in idle. These numbers are higher than on regular SATA SSDs, but with good reason - the extra speed and number of chips elevate energy consumption by a reasonable degree.

Inside, too, the similarities exist, particularly with the P3500. An Intel-engineered 18-channel flash controller, operating at the same 400MHz as the enterprise drives, runs in concert with 20nm MLC flash born from the collaboration between Intel and Micron.

Intel quotes an endurance rating of 219TBW (Terabytes Written) for the 1.2TB model. While impressive in its own right, equating to 120GB per day over the five-year warranty period, this crucial metric is considerably lower than the 3,600GB per day on the same-capacity P3600 drive. Why? That enterprise drive uses flash imbued with High Endurance Technology (HET).
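The per-day figure follows directly from the rated total and the warranty length, as the short calculation below shows. It uses decimal units (1TB = 1,000GB) and a 365-day year, and also expresses each drive's allowance as drive writes per day (DWPD), a common way to compare endurance across capacities. The function names are our own.

```python
def gb_written_per_day(tbw, warranty_years):
    """Spread a total-bytes-written rating evenly over the warranty period."""
    return tbw * 1000 / (warranty_years * 365)


def drive_writes_per_day(gb_per_day, capacity_gb):
    """Daily write allowance as a fraction of the drive's capacity."""
    return gb_per_day / capacity_gb


ssd750_gb_day = gb_written_per_day(tbw=219, warranty_years=5)
print(ssd750_gb_day)                               # 120.0
print(drive_writes_per_day(ssd750_gb_day, 1200))   # 0.1
print(drive_writes_per_day(3600, 1200))            # 3.0 for the 1.2TB P3600
```

Put that way, the enterprise drive is rated for 30 times the daily write volume of the SSD 750 at the same capacity.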

Intel SSD 750 NVMe drives

| Model | 400GB | 1,200GB |
|---|---|---|
| Controller | Intel 18-channel, 400MHz | Intel 18-channel, 400MHz |
| NAND | 20nm IMFT MLC NAND | 20nm IMFT MLC NAND |
| Interface | PCIe Gen 3 x4, NVMe 1.0 | PCIe Gen 3 x4, NVMe 1.0 |
| Sequential Read Speed | up to 2,200MB/s | up to 2,400MB/s |
| Sequential Write Speed | up to 900MB/s | up to 1,200MB/s |
| Random IOPS (4KB Reads) | up to 430K IOPS | up to 440K IOPS |
| Random IOPS (4KB Writes) | up to 230K IOPS | up to 290K IOPS |
| Power Loss Protection | Yes | Yes |
| Available Form Factors | half-height PCIe card and SFF-8639, 2.5in | half-height PCIe card and SFF-8639, 2.5in |
| Active Power Consumption | 25W Typical | 25W Typical |
| Idle Power Consumption | 4W Typical | 4W Typical |
| Life Expectancy | 1.2 million hours MTBF | 1.2 million hours MTBF |
| Endurance | up to 219TBW | up to 219TBW |
| Warranty | 5 Years | 5 Years |
| Current Retail Price | £300 | £800 |

Entry into the NVMe world isn't cheap, mind, but as the SSD 750 is able to obliterate regular drives in both sequential throughput and random IOPS, enthusiasts who need, or can afford, top-drawer performance will understand that such prowess exacts a large premium.

The 1.2TB drive doesn't mess around. Sequential performance of 2.4GB/s read and 1.2GB/s write is impressive enough, but what's probably more important is the high IOPS rating on both fronts, made possible, in part, via NVMe.