Introduction

We finished off the GeForce GTX 1080 FE review with fulsome words of praise for Nvidia's best-ever consumer GPU: 'Want the best consumer graphics card in the world? The GeForce GTX 1080, in no uncertain terms, is it.' That statement no longer holds, because the GTX 1080 is no longer the champ. It's been right-hooked to the canvas and is out for the count. Has AMD released a monster GPU under the radar, then? Afraid not.

Rather, Nvidia has cut the silicon legs off the GTX 1080 by releasing another Pascal-based GPU that's bigger, beefier and plain faster. Enter the Titan X. Described by Nvidia CEO Jen-Hsun Huang as 'The Ultimate', it is the card to own... if you can afford the $1,200 price tag.

The new GPU is referred to simply as Titan X - but there's GeForce branding on the side of the cooler. Let's now cut right to the chase and see what makes Titan X tick.

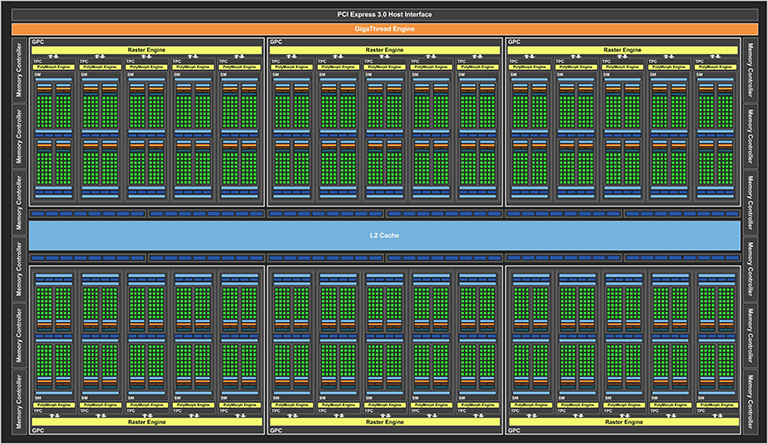

The full-fat GP102 die, not completely used on Titan X Pascal

Above is the full implementation of the GP102 Pascal die on which Titan X is based. You haven't seen this precise die before, because the original big-chip Pascal, GP100, though ostensibly similar, is larger, not least because it uses a different memory interface (HBM2) and carries far more double-precision hardware.

GP102 is, in effect, the consumer counterpart to GP100, and it, too, tops out at 3,840 single-precision processors, arranged here as 30 SMs of 128 processors each. Like its Tesla counterpart, the P100, which enables 3,584 of GP100's cores, Titan X doesn't use the full chip: 28 of the 30 SMs are active, reducing the core count by 256 (2x128), with a commensurate reduction in texture units and other per-SM goodness. Why? Nvidia doesn't provide a clear-cut answer, but our guess is that requiring all 30 SMs to be functional would reduce yields to an unacceptable degree.
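The SM arithmetic above is easy to check. This is a minimal sketch; the eight-texture-units-per-SM figure is an assumption inferred from the 224 texture units quoted for 28 active SMs, and the helper name is purely illustrative.

```python
# Hypothetical helper: derive shader and texture-unit counts from active SMs.
# Assumption: each GP102 SM holds 128 shaders and 8 texture units
# (8 x 28 = 224, matching Titan X's quoted texture-unit count).
CORES_PER_SM = 128
TEX_UNITS_PER_SM = 8


def enabled_resources(active_sms: int) -> dict:
    """Return shader and texture-unit totals for a given number of active SMs."""
    return {
        "shaders": active_sms * CORES_PER_SM,
        "texture_units": active_sms * TEX_UNITS_PER_SM,
    }


full_gp102 = enabled_resources(30)  # every SM on the die enabled
titan_x = enabled_resources(28)     # two SMs disabled on Titan X

print(full_gp102)  # {'shaders': 3840, 'texture_units': 240}
print(titan_x)     # {'shaders': 3584, 'texture_units': 224}
print(full_gp102["shaders"] - titan_x["shaders"])  # 256
```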

What you do have is 3,584 processors, 224 texture units, 96 ROPs, and a now-familiar GDDR5X back-end operating at a GTX 1080-matching 10Gbps (10,000MHz effective). Tesla P100, as you may know, uses HBM2 memory instead. The next question is how Titan X fits into the present ultra-premium hierarchy.

| | Titan X | GeForce GTX 1080 | GeForce GTX 1070 | GeForce GTX 980 Ti | GeForce GTX Titan X |
|---|---|---|---|---|---|
| Launch date | August 2016 | May 2016 | May 2016 | June 2015 | March 2015 |
| Codename | GP102 | GP104 | GP104 | GM200 | GM200 |
| Architecture | Pascal | Pascal | Pascal | Maxwell | Maxwell |
| Process (nm) | 16 | 16 | 16 | 28 | 28 |
| Transistors (bn) | 12 | 7.2 | 7.2 | 8.0 | 8.0 |
| Die Size (mm²) | 471 | 314 | 314 | 601 | 601 |
| Core Clock (MHz) | 1,417 | 1,607 | 1,506 | 1,000 | 1,000 |
| Boost Clock (MHz) | 1,531 | 1,733 | 1,683 | 1,076 | 1,089 |
| Shaders | 3,584 | 2,560 | 1,920 | 2,816 | 3,072 |
| GFLOPS (FP32, at boost) | 10,974 | 8,873 | 6,463 | 6,060 | 6,691 |
| Memory Size | 12GB | 8GB | 8GB | 6GB | 12GB |
| Memory Bus | 384-bit | 256-bit | 256-bit | 384-bit | 384-bit |
| Memory Type | GDDR5X | GDDR5X | GDDR5 | GDDR5 | GDDR5 |
| Memory Clock | 10Gbps | 10Gbps | 8Gbps | 7Gbps | 7Gbps |
| Memory Bandwidth (GB/s) | 480 | 320 | 256 | 336 | 336 |
| Power Connector | 8-pin + 6-pin | 8-pin | 8-pin | 8-pin + 6-pin | 8-pin + 6-pin |
| TDP (watts) | 250 | 180 | 150 | 250 | 250 |
| Launch MSRP | $1,200 | $699 (FE) | $449 (FE) | $649 | $999 |
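The GFLOPS and bandwidth rows are derived from the other rows. A minimal sketch of that derivation, assuming peak FP32 counts one fused multiply-add (two floating-point operations) per shader per clock at the listed boost clock, and that bandwidth is the bus width in bytes multiplied by the per-pin data rate:

```python
def peak_gflops(shaders: int, boost_mhz: int) -> float:
    """Peak FP32 GFLOPS: 2 FLOPs (one FMA) per shader per clock."""
    return shaders * 2 * boost_mhz / 1000


def bandwidth_gbs(bus_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s: bus width in bytes times per-pin data rate."""
    return bus_bits / 8 * data_rate_gbps


# (shaders, boost clock MHz, bus width bits, data rate Gbps), from the table
cards = {
    "Titan X": (3584, 1531, 384, 10),
    "GeForce GTX 1080": (2560, 1733, 256, 10),
    "GeForce GTX 1070": (1920, 1683, 256, 8),
    "GeForce GTX 980 Ti": (2816, 1076, 384, 7),
    "GeForce GTX Titan X": (3072, 1089, 384, 7),
}

for name, (shaders, boost, bus, rate) in cards.items():
    print(f"{name}: {peak_gflops(shaders, boost):,.0f} GFLOPS, "
          f"{bandwidth_gbs(bus, rate):.0f} GB/s")
```

Applying the same boost-clock method to every column keeps the GFLOPS figures directly comparable across generations.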

Specification analysis

We have placed the Titan X next to the base specifications of the nascent GTX 1080. What we see is a 40 per cent increase in shaders, texture units and other per-SM resources. Helping feed the beast is a wider, 384-bit memory controller that provides a natural 50 per cent uplift in peak bandwidth over the GTX 1080. And there's 50 per cent more memory, too, keeping the 12GB tradition of the recent Titan line intact.
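The three uplifts quoted above are simple ratios of the table figures. A quick sketch, using only numbers from the spec table:

```python
def uplift_pct(new: float, old: float) -> float:
    """Percentage increase of new over old."""
    return (new / old - 1) * 100


titan_x = {"shaders": 3584, "bandwidth_gbs": 480, "memory_gb": 12}
gtx_1080 = {"shaders": 2560, "bandwidth_gbs": 320, "memory_gb": 8}

for key in titan_x:
    print(f"{key}: +{uplift_pct(titan_x[key], gtx_1080[key]):.0f}%")
```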

Fitting all the extra hardware into the core means the GP102 die balloons out to 471mm² on the 16nm process. Sounds big, right? It's actually comfortably smaller than the 561mm² die in even a GTX 780 of 2013. Using an itty-bitty manufacturing process enables Nvidia to pack in 12bn transistors for good measure. Here's another big number: Titan X is the first consumer GPU to offer more than 10TFLOPS of single-precision performance.
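To put the 471mm² and 12bn figures in context, transistor density can be compared with the 28nm GM200 from the table. This is a rough sketch; density here is simply transistors divided by die area, which glosses over how much of each die is logic, cache or I/O.

```python
def density_m_per_mm2(transistors_bn: float, die_mm2: float) -> float:
    """Millions of transistors per square millimetre."""
    return transistors_bn * 1000 / die_mm2


gp102 = density_m_per_mm2(12.0, 471)  # Pascal, 16nm
gm200 = density_m_per_mm2(8.0, 601)   # Maxwell, 28nm

# prints "GP102: 25.5M/mm², GM200: 13.3M/mm², ratio 1.9x"
print(f"GP102: {gp102:.1f}M/mm², GM200: {gm200:.1f}M/mm², ratio {gp102 / gm200:.1f}x")
```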

Nvidia's engineers did a good job of getting Pascal's core speed up to impressive levels. The big-chip GP102 is still fast by modern standards, with a 1,531MHz rated boost clock, but it gives up around 200MHz to the GTX 1080. So it's wider in design but slower on the cores. A back-of-the-envelope calculation suggests Titan X should benchmark around 30 per cent higher than a standard GTX 1080, give or take, and substantially higher than the previous, Maxwell-based Titan X.
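One way to frame that back-of-the-envelope estimate is to compare peak shader throughput, pixel fill rate and memory bandwidth. A minimal sketch: the 64-ROP figure for the GTX 1080 is not in the table above and is supplied here, and real games scale with none of these theoretical ratios exactly.

```python
# Rough, theoretical ratios only: games are limited by different resources
# at different times, so no single ratio predicts benchmark results.
TITAN_X = {"shaders": 3584, "rops": 96, "boost_mhz": 1531, "bandwidth_gbs": 480}
GTX_1080 = {"shaders": 2560, "rops": 64, "boost_mhz": 1733, "bandwidth_gbs": 320}


def clocked_ratio(unit: str) -> float:
    """Ratio of (unit count x boost clock), Titan X over GTX 1080."""
    return (TITAN_X[unit] * TITAN_X["boost_mhz"]) / (GTX_1080[unit] * GTX_1080["boost_mhz"])


shader_gain = clocked_ratio("shaders")  # peak FP32 throughput
fill_gain = clocked_ratio("rops")       # peak pixel fill rate
bandwidth_gain = TITAN_X["bandwidth_gbs"] / GTX_1080["bandwidth_gbs"]

for label, gain in [("shader", shader_gain), ("fill rate", fill_gain), ("bandwidth", bandwidth_gain)]:
    print(f"{label}: +{(gain - 1) * 100:.0f}%")
```

The clock deficit trims the 40 per cent shader advantage to roughly 24 per cent, while fill rate and bandwidth gain more, which is why an overall figure of around 30 per cent is a reasonable guess.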

The performance difference between a decent partner-overclocked, custom-cooled GTX 1080 and this Titan X is going to be smaller, of course, and common sense suggests in-game benefits will only be felt at a 4K resolution.

Adding cores increases power consumption, yet Pascal's energy efficiency is such that Titan X fits within a standard 250W thermal envelope, fed by an 8-pin plus 6-pin power arrangement.
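Why 8-pin plus 6-pin? A quick sketch of the in-spec power budget, assuming the nominal PCI Express limits of 75W from the slot, 75W per 6-pin and 150W per 8-pin connector:

```python
# Nominal PCI Express power limits, in watts.
SLOT_W = 75
SIX_PIN_W = 75
EIGHT_PIN_W = 150


def board_power_budget(six_pin: int = 0, eight_pin: int = 0) -> int:
    """Maximum in-spec board power from the slot plus auxiliary connectors."""
    return SLOT_W + six_pin * SIX_PIN_W + eight_pin * EIGHT_PIN_W


titan_x_budget = board_power_budget(six_pin=1, eight_pin=1)
gtx_1080_budget = board_power_budget(eight_pin=1)

print(titan_x_budget, titan_x_budget - 250)    # 300 50
print(gtx_1080_budget, gtx_1080_budget - 180)  # 225 45
```

A single 8-pin connector would cap the board at 225W, below Titan X's 250W TDP, so the extra 6-pin is needed to stay within spec with some headroom.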

Then we come to price. Costing a cool $1,200 (£1,099) and available only from Nvidia directly, with no plans for partner-built cards, this is most definitely not a value proposition in any reasonable sense of the word. Nvidia doesn't want it to be, frankly, because Titan X epitomises the absolute cutting edge of performance. Can you build a faster graphics subsystem by using SLI? Most likely. Is $1,200 an enormous amount of money for a single card? Yup. Crucially, is there a market for those who want the absolute best and have the means and will to pay for it? Most definitely, and it's this customer who is already in the Titan X wheelhouse.
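To put a rough number on 'not a value proposition', here is the cost per TFLOPS of peak FP32, using the launch prices and boost-clock figures from the table. It's an illustrative metric only, since peak FLOPS ignore memory, ROPs and real-world scaling.

```python
def peak_tflops(shaders: int, boost_mhz: int) -> float:
    # Two FLOPs (one fused multiply-add) per shader per clock.
    return shaders * 2 * boost_mhz / 1e6


# (launch price in USD, peak FP32 TFLOPS at boost)
cards = {
    "Titan X": (1200, peak_tflops(3584, 1531)),
    "GTX 1080 FE": (699, peak_tflops(2560, 1733)),
    "GTX 1070 FE": (449, peak_tflops(1920, 1683)),
}

for name, (price_usd, tflops) in cards.items():
    print(f"{name}: ${price_usd / tflops:.0f} per TFLOPS")
```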

Much like the Core i7-6950X with its sky-high price, this is an aspirational product that isn't intended for those of you whose budget stretches to the GTX 1070 or, at a push, the GTX 1080. This is a GPU for a four-grand system or more, so whilst we could argue that a GPU of this size should be cheaper, that Nvidia is charging a hefty premium, and so on, doing so misses the reality: this graphics processor has no performance peer, and there are folk who can readily afford it.

On a different tack, Titan X runs double-precision apps at 1/32nd of its single-precision rate, the same ratio as the previous Titan X, which works out at 343GFLOPS in this case. Nvidia is also aiming this GPU as much at the scientific community, deep learning in particular, where budget is less of a constraint. But whichever way you cut or rationalise it, $1,200 is some serious change.
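The 343GFLOPS double-precision figure follows directly from the single-precision peak. A minimal sketch, assuming the same boost-clock FP32 calculation used for the spec table:

```python
FP64_RATIO = 1 / 32  # double-precision rate relative to single precision


def fp64_gflops(fp32_gflops: float, ratio: float = FP64_RATIO) -> float:
    """Peak FP64 GFLOPS given peak FP32 GFLOPS and the FP64:FP32 ratio."""
    return fp32_gflops * ratio


titan_x_fp32 = 3584 * 2 * 1531 / 1000  # peak FP32 GFLOPS at boost clock
print(round(fp64_gflops(titan_x_fp32)))  # 343
```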

Right-o, that's pricing out of the way. We'll take a peek at the card and then get to the benchmarks.