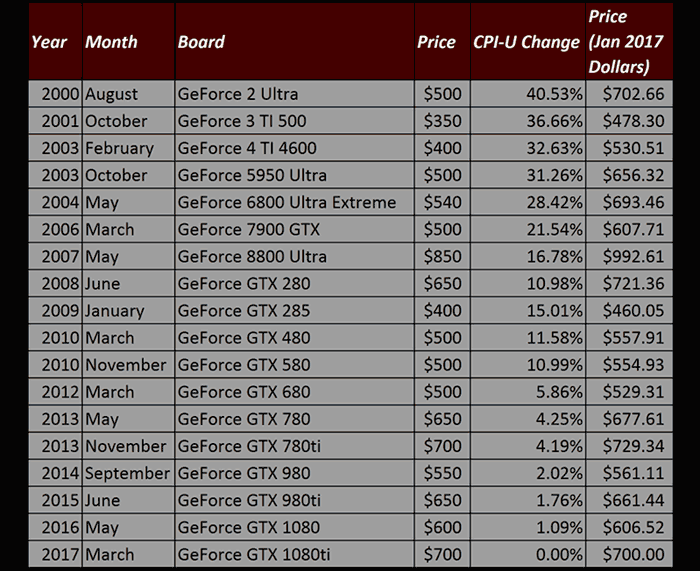

Just ahead of the weekend, HardOCP contributor Zarathustra published a pricing table comparing all the high-end consumer graphics cards released by Nvidia since the year 2000. The table is interesting because it records Nvidia's launch pricing of flagship (non-Titan) graphics cards aimed at gamers alongside the same prices adjusted using the Consumer Price Index (CPI-U) values published by the US Bureau of Labor Statistics. In other words, it shows inflation-adjusted prices.
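For readers who want to reproduce this kind of adjustment, the usual approach is to scale the launch price by the ratio of the current index value to the index value at launch. The snippet below is a minimal sketch of that arithmetic, not Zarathustra's actual method; the function name `adjust_for_inflation` is hypothetical, and the index values and price in the example are illustrative placeholders, not real CPI-U figures or real card prices.

```python
def adjust_for_inflation(launch_price, cpi_at_launch, cpi_now):
    """Express a historical price in today's money using a price index.

    Hypothetical helper: scales the price by cpi_now / cpi_at_launch.
    """
    if cpi_at_launch <= 0 or cpi_now <= 0:
        raise ValueError("index values must be positive")
    return launch_price * cpi_now / cpi_at_launch


# Placeholder numbers only (NOT real CPI-U values or real launch prices):
# a $500 card launched when the index stood at 100, with the index now at 150.
adjusted = adjust_for_inflation(500.0, 100.0, 150.0)
print(f"${adjusted:.2f}")  # prints "$750.00"
```

To get real figures, substitute the CPI-U values for the launch month and the comparison month from the Bureau's published series.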

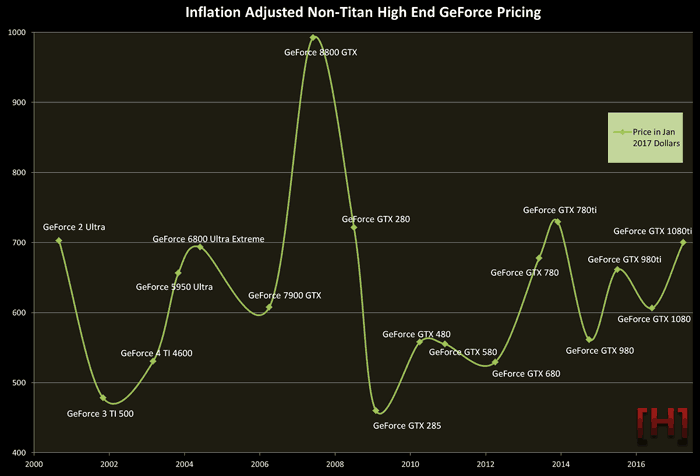

To put the table's results into perspective, Nvidia's high-end graphics card launches are often met with cries of 'price gouging' and similarly emotive language about the company's pricing. First of all, we must remember that the flagships are premium and / or halo products for a range of graphics cards. Secondly, the historical chart below, again by Zarathustra, plots the inflation-adjusted prices as an easy-to-assess green wiggly line, which indicates that the newly launched GeForce GTX 1080 Ti is "pretty much in line with where NVIDIA has typically been".

The original report notes that Nvidia's flagship pricing has fluctuated significantly over the years, even after the inflation adjustments. Neatly, the year 2000 GeForce 2 Ultra (pictured below) and the brand spanking new GTX 1080 Ti look to be the 'same' price. As anyone with a faint grasp of economics might have guessed, even without this data, the years in which Nvidia priced its flagship most competitively were the years in which it faced "higher competition in the market". Let's hope for a downswing in the green line caused by fierce competition in 2017-18.