The power management talk focussed on ways to reduce overall system power. Doing so helps to solve the heat and cost problems and, with the help of dual-core and new process technology, also lets you pack more computing power into the same space we're using now.

Their first demonstration used two almost identically configured Xeon systems in high-density memory configurations, one using DDR-II memory and the other using DDR. The DDR-II-equipped system drew some 100W less than its DDR-equipped counterpart. Spread over a large number of machines in your organisation, just the switch in memory type can save you a lot of money and help with thermal design and other heat-related problems.
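To put that 100W figure in context, here is a rough back-of-the-envelope sketch of what it adds up to over a year. Only the 100W saving comes from the demonstration; the fleet size of 500 machines, the price of 0.10 per kWh and the assumption that every machine runs around the clock are illustrative guesses, and the function name `annual_savings` is made up for this example. Cooling costs are ignored.

```python
def annual_savings(watts_saved_per_machine, machines, price_per_kwh,
                   hours_per_year=24 * 365):
    """Return (kWh saved per year, money saved per year) for a fleet.

    Assumes every machine runs continuously unless hours_per_year
    is overridden.
    """
    # Watts * machines * hours gives watt-hours; divide by 1000 for kWh.
    kwh_saved = watts_saved_per_machine * machines * hours_per_year / 1000.0
    return kwh_saved, kwh_saved * price_per_kwh


if __name__ == "__main__":
    # 100W is the figure from the demo; 500 machines and 0.10 per kWh
    # are assumptions chosen purely for illustration.
    kwh, cost = annual_savings(100, 500, 0.10)
    print(f"{kwh:.0f} kWh saved, {cost:.2f} saved per year")
```

With those assumed numbers the saving works out to 438,000 kWh a year before you count any reduction in cooling, which is why a per-machine difference that sounds small matters at data centre scale.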

The second method of power saving discussed was the move to even more advanced processes. Intel propose to switch to 65nm process technology for all their major microprocessor lines by the end of 2006, and 65nm brings with it a few benefits in terms of power consumption and heat output. While 90nm has taken some flak for being power hungry and hot, at least in Intel's Prescott-based Pentium 4 and Xeon processors, 65nm seems to be much better.

Intel's latest low-power transistors and, perhaps crucially, the use of low-k dielectric materials in the process will help reduce current leaking through closed gates and therefore reduce heat output and power consumption.

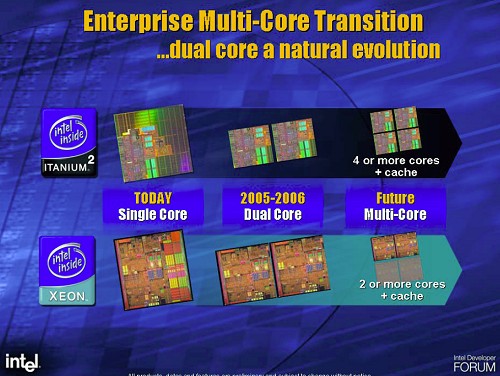

More multi-core, modularisation and Silvervale

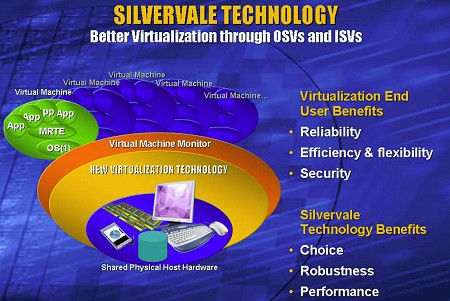

Multi-core was again on the agenda, this time combined with Silvervale, Intel's virtualisation technology or VT for short, one of Intel's big Ts (their umbrella term for things like CMT, EM64T, HT). Silvervale allows computer systems to be partitioned, so that multiple operating systems can run on the same physical hardware. That sounds similar to technology some of the other big-iron vendors have been using for many a year, but Silvervale aims to reduce the latency inherent in software-only virtualisation, seen when the host software has to manage the virtual servers running on the hardware.

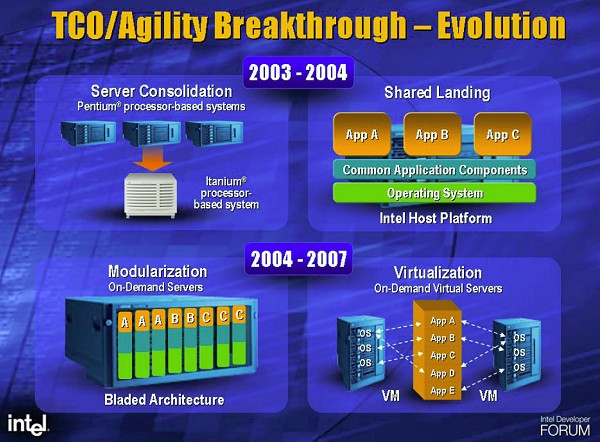

Modularisation is also a big deal to Intel. Think of blade systems as a platform where as much computing power as possible is packed into a compact form factor and used modularly in high performance computing systems, to create standardised multi-processor, multi-node systems from as few as one blade to as many as thousands.

To that end, Intel have worked hard to move Itanium and Xeon to the blade server form factor. The result is that Intel now power 57% of the top 500 supercomputer list, most of which are built using Intel-powered blades in common rack infrastructure. That's up from a 3% standing on the list when it was first created.

Itanium

Itanium takes a lot of hits in the online press, but Intel have seen 300% growth year on year in sales, with 1000% growth in systems packing in 64 or more processors. You can now get Itanium in blade server packaging, and Intel said that more and more HPC system builders are looking to Itanium as a result.

The net effect?

New processes allow Intel to make CPUs that produce less heat, pack more CPU power into a single die, more dies into a CPU package and therefore more CPUs into a single system. That means computing power can be consolidated back into fewer machines. Intel hopes this will help companies reduce total cost of ownership on their big-iron hardware.